Experts Provide Early Analysis of the American Privacy Rights Act

Justin Hendrix, Ben Lennett / Apr 11, 2024

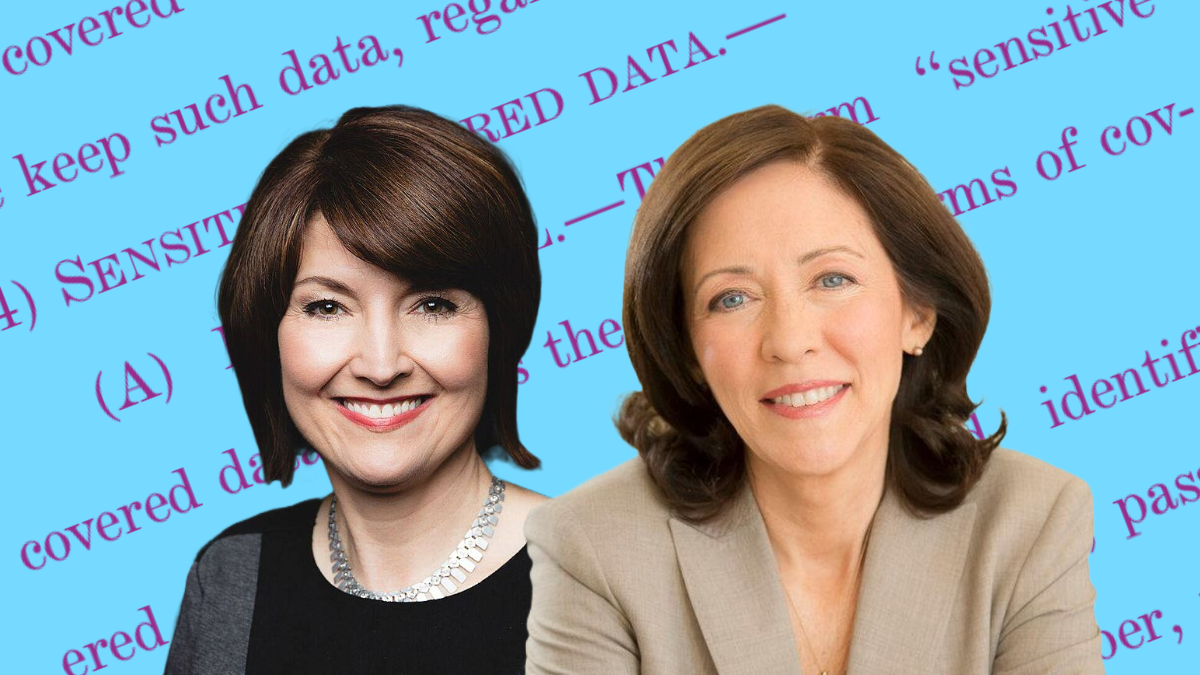

Rep. Cathy McMorris Rodgers (R-WA), Sen. Maria Cantwell (D-WA)

On Sunday, April 7, a draft of a bill titled the American Privacy Rights Act (APRA) was released by two lawmakers from Washington state, Senator Maria Cantwell (D-WA) and Rep. Cathy McMorris Rodgers (R-WA). “This bipartisan, bicameral draft legislation is the best opportunity we’ve had in decades to establish a national data privacy and security standard that gives people the right to control their personal information,” said Rep. Rodgers, who chairs the House Energy & Commerce Committee, and Sen. Cantwell, who chairs the Senate Commerce Committee, in a joint statement.

The collaboration between the two lawmakers is notable in part because Sen. Cantwell was a forceful opponent of a previous attempt at comprehensive privacy legislation that Rep. McMorris Rodgers championed. Previously, Rep. McMorris Rodgers steered the American Data Privacy and Protection Act (ADPPA) through the House Energy & Commerce Committee she chairs, advancing it out of the committee by a 53-2 vote. But the bill stalled in the House as California Democrats raised concerns about whether it would preempt that state’s privacy law. Sen. Cantwell opposed it at the time, arguing the legislation’s enforcement measures were weak.

RELATED READING:

- The American Privacy Rights Act of 2024 Explained: What Does the Proposed Legislation Say, and What Will it Do?

- Can the American Privacy Rights Act Accomplish Data Minimization?

- Unclear Protections in the American Privacy Rights Act Not Worth Broad Preemption

Tech Policy Press invited a variety of experts and organizations to share their early analysis of the APRA. Respondents included the Electronic Privacy Information Center (EPIC), the Center for Democracy & Technology (CDT), the Future of Privacy Forum, the Open Technology Institute, Demand Progress, Public Knowledge, the Check My Ads Institute, and Data & Society. Their responses are lightly edited for clarity and style.

Tech Policy Press invites other perspectives on the proposed legislation; learn more about how to contribute here.

The landscape has shifted since 2022; this Congress must meet the moment

Alan Butler, Executive Director and President, Electronic Privacy Information Center

Caitriona Fitzgerald, Deputy Director, Electronic Privacy Information Center

It’s 2024, and we find ourselves once again tracking work in Congress on a comprehensive privacy bill. As we review the APRA and give input as it moves forward in Congress, we will be focusing specifically on the strength of the data minimization rules and the ability to enforce those rules under the bill. As we said in 2022, getting strong privacy protections for all Americans is a top priority, but the landscape has shifted significantly since 2022 and Congress needs to meet the moment if it is going to set a federal standard.

Americans are demanding real protections for their data and states have passed strong privacy laws. But shifting away from a commercial surveillance infested internet ecosystem is a major undertaking. Experience shows that this requires clear rules and guidance, coordination across jurisdictions, and a robust enforcement capacity. The APRA’s substantive and enforcement provisions should be read with these issues in mind.

So, how does enforcement look under APRA? The bill has a three-tiered enforcement structure similar to what we saw in the ADPPA (the FTC, states, and private actions), but there have been some significant changes as well. Data minimization rules can’t be enforced through the private right of action; the California Privacy Protection Agency’s work could be significantly disrupted by preemption; and the new FTC privacy bureau would need substantial funding and stronger enforcement tools. There is a lot to analyze, and we look forward to working with Congress to ensure strong, comprehensive privacy protections for all Americans.

APRA’s advertising definitions need work

Nathalie Maréchal, PhD, Co-Director, Privacy & Data Project, Center for Democracy & Technology

If APRA becomes law, one industry that will be profoundly transformed is digital advertising, which relies heavily on data collected from around the internet (and beyond) for both targeting (matching ad content to people) and measurement (assessing the effectiveness of campaigns). This could be a good thing: as I’ve written repeatedly over the years, today’s online ad ecosystem leaves its most important stakeholders—people, publishers, and advertisers themselves—dissatisfied, while enriching ad-tech intermediaries and major tech platforms in an increasingly concentrated market. Comprehensive federal privacy legislation is an important opportunity to reinvent digital advertising in a way that respects privacy and other rights and bolsters democracy, while supporting legitimate commerce and content creation.

As always, the devil is in the details, and many of APRA’s ad-tech related definitions are either inadequate or altogether missing. Take contextual advertising, for example: it lacks a definition and instead is relegated to a brief description in the exclusions list in the definition for targeted advertising. That definition also excludes first-party advertising (“based on an individual’s visit to or use of a website or online service that offers a product or service that is related to the subject of the advertisement”). APRA seems to use “targeted advertising” to mean only “third-party behavioral advertising,” which is incomplete: ads can also be targeted based on first-party behavioral data, context, characteristics, interests, or something else. It makes sense to treat ads differently based on how they are matched with audiences, but that requires more detailed definitions.

Aside from my issues with the word “targeting,” a more serious problem is that APRA’s description of contextual advertising (“when an advertisement is displayed online based on the content of the webpage or online service on which the advertisement appears”) fails to address crucial questions that will determine its future economic viability. What degree of geographic targeting should be permitted, if any, and how should a user’s location be determined at the technical level? How does the concept of “context,” seemingly defined with static web pages in mind, translate to podcasting, streaming video, or social networking sites that have “feeds” that change for different people and at different times? These are answerable questions, fortunately. Stay tuned for analysis and recommendations from CDT.

The APRA’s approach to data minimization

Jordan Francis, Policy Counsel, Future of Privacy Forum

The American Privacy Rights Act’s (APRA) novel approach to data minimization reflects an emerging perspective shift in US privacy law—increasingly, policymakers are experimenting with placing default limits on how personal information can be collected, used, and shared instead of requiring individuals to exercise privacy rights on a burdensome case-by-case basis. Under the APRA discussion draft, both covered entities and service providers (a notable change from many existing frameworks) would be barred from collecting, processing, retaining, or transferring covered data “beyond what is necessary, proportionate, and limited to provide or maintain . . . a specific product or service requested by the individual,” or to effect a specifically enumerated “permitted purpose,” such as protecting data security. APRA would also create affirmative express consent requirements for transferring sensitive covered data to third parties and for collecting, processing, retaining, or transferring biometric and genetic information.

APRA’s data minimization requirements diverge from the approaches taken under state comprehensive privacy laws as well as the federal bill on which the discussion draft is based. APRA’s predecessor from 2022, the ADPPA, was notable in part due to its novel two-tier framework for data minimization. Under the ADPPA, covered entities would have been permitted to collect, process, and transfer covered data only where doing so was “limited to what is reasonably necessary and proportionate” to “provide or maintain a specific product or service requested by” an individual or to effect an enumerated “permissible purpose.” For sensitive data, ADPPA would have limited collection and processing to what is “strictly necessary” to provide or maintain a product or service and prohibited transfers to third parties without affirmative consent. ADPPA’s framework for data minimization has become influential in the states and is reflected in privacy legislation under consideration in Maine, Vermont, and Michigan, as well as the recently passed Maryland Online Data Privacy Act, a bill whose supporters are calling one of the strongest comprehensive privacy laws in the nation. The APRA discussion draft largely preserves the ADPPA’s data minimization standard, but it includes key distinctions that would make the proposal both more and less restrictive in different ways, such as rolling back ADPPA’s “strictly necessary” standard for sensitive data processing in favor of consent requirements.

The APRA discussion draft’s data minimization standard also leaves some ambiguity as to the bounds of what data practices are permissible and under what circumstances. For example, the APRA discussion draft is unclear on whether the consent requirements for sensitive data apply in addition to or in place of the general data minimization rule for covered data. In particular, data collected from browsing activity across websites commonly used for targeted advertising appears to be simultaneously subject to opt-in rights (with respect to sensitive covered data transfers in Section 3), opt-out rights (with respect to targeted advertising in Section 6), and default prohibitions on use (with respect to permitted purposes in Section 3), making the proposal’s potential impact on the online advertising ecosystem unclear. Furthermore, a strict reading of the proposed minimization requirements of the APRA discussion draft could curtail certain socially beneficial practices, such as processing for research carried out in the public interest.

While APRA could represent a major step forward for the protection of personal data under US privacy law, lawmakers will likely need to further clarify and refine the framework's novel privacy rights and duties as it advances, particularly with respect to data minimization, permissible purposes, and sensitive data.

The realities of compromise

David Morar, PhD, Senior Policy Analyst, Open Technology Institute

It would be an understatement to say I’m excited about credibly discussing the potential passage of federal comprehensive privacy legislation—again. For context, I’ve argued for passing ADPPA at every single public event at which I’ve spoken since the summer of 2022. Given the need to ensure bipartisan support, the discussion draft of the APRA—much like the ADPPA—is less a reflection of a comprehensive regulatory regime and more one of a negotiated compromise, and we need to get comfortable with that reality, even while we push for a different result of said compromise.

My perpetual optimism about eventually getting a bill like this passed is usually tempered by the understanding that we likely won’t get too many chances like this, and we should not waste a second one. What is clear is that while the bill name and text may be new, we are not having a new conversation about federal legislation: the APRA is rooted in some of the same concepts as the ADPPA and reflects, among other things, an interim compromise on principles for which civil society has long advocated.

The key word in understanding the APRA is “interim,” since we are dealing with the discussion draft. The current form of the APRA has loosely different answers to the same legislative questions that were addressed by the ADPPA, but it does not mean it will stay that way. The APRA is a credible compromise proposal that deserves urgent engagement. We need to ensure that it can pass with thoughtful modifications that ensure the bill is as effective as possible, especially for communities and individuals most disproportionately affected by privacy violations. Let’s roll up our sleeves and get to work.

The scope of state preemption is too broad

Emily Peterson-Cassin, Director, Corporate Power, Demand Progress

The scope of preemption here is broad, and that is a significant drawback. Since the last major Congressional privacy push two years ago, states have shown that they can and will legislate in this area to protect their residents. And, states can act quickly in response to changing technology in a way that Congress simply cannot. This bill sets a ceiling on how much protection states can offer their residents, when it should be setting a floor.

It's great that Congress is taking a look at this, but advocates shouldn't rush to settle for something inferior just because it is so long overdue. We need to consider whether these protections are truly what we need at this moment, and whether they are strong enough and flexible enough to continue to protect us until Congress chooses to act again. At this moment, the answer is no.

Do not remove the FCC’s authority over communications services

Sara Collins, Director of Government Affairs, Public Knowledge

The death of a comprehensive privacy law has been greatly exaggerated. While the American Data Privacy and Protection Act (ADPPA) may be no more, the American Privacy Rights Act (APRA) lives as its successor. All of us are reviewing this bill in light of two more years of state privacy laws passing, new technology coming into play, and deep concerns about how kids use the internet. At Public Knowledge, we are also concerned about something that seems to have flown under the radar of most other civil society organizations.

The Federal Communications Commission has authority over communications networks, and the agency is about to restore its authority over broadband, as well; unfortunately, the APRA appears to preempt the entirety of the Communications Act. This overbroad preemption will have sweeping effects, jeopardizing the reliability of our communications networks, competition in voice, and important consumer protection goals like net neutrality, transparency in billing, and anti-discrimination provisions.

To name just a few, the APRA would preempt the recently passed Safe Connections Act (protecting survivors of domestic abuse); the TRACED Act (mandating caller ID verification and authorizing action against robocallers); the Communications Assistance for Law Enforcement Act (CALEA); and every other provision potentially relating to the regulation of phone numbers, call routing, and cybersecurity. It would even prevent the FCC from readopting net neutrality rules that prohibit blocking or degrading applications. All of these rules involve the “collection, processing, retention, transfer, or security of covered data.” Indeed, the preemption language is so broad its full scope is difficult to determine. While the ADPPA was not perfect, it had a much narrower preemption of the FCC’s authority.

Public Knowledge would prefer that the FCC not be preempted at all. It is an important regulator that has done significant work in protecting Americans’ privacy. It should be treated like the regulators that enforce Gramm Leach Bliley (i.e., Financial Privacy rules) and HIPAA (i.e., Health Privacy rules), and not be displaced by the APRA. At the very least, the APRA should narrow its preemption to cover only those parts of the Communications Act that directly deal with privacy, rather than preempt the Communications Act entirely. The unintended consequences of such preemption language would be disastrous.

Too many exemptions and loopholes

Arielle Garcia, Director of Intelligence, Check My Ads

Sarah Kay Wiley, Director of Policy, Check My Ads

It is long past due for America to have comprehensive privacy legislation, and we applaud Senator Cantwell and Representative McMorris Rodgers for bringing privacy to the foreground during an election year.

However, as the ad-tech watchdog, we are concerned with potential loopholes that, if enacted, could allow the digital advertising industry to continue its unchecked trade in consumers’ sensitive personal data and put more power into the hands of dominant tech companies — harming individuals, advertisers, and democracy.

1. Ad-tech companies and data brokers could exploit “service provider” exemptions to undermine the privacy of people’s web browsing data

While APRA says it will require opt-in consent for sharing sensitive web browsing data with third parties, there is a big loophole that ad-tech companies are all too familiar with: consent isn’t needed to share this data with “service providers.” We have already seen ad-tech companies and data brokers attempt to position themselves as service providers to avoid adhering to consumer choice and opt-in requirements in the past. This loophole is troubling and we hope to see it remedied to keep people’s intimate online activity data out of the hands of shady middlemen and dodgy data brokers.

2. The “deidentified data” loophole is the lifeblood of surveillance advertising

Ad-tech companies have a nasty habit of playing fast-and-loose with what they consider to be “deidentified” data while openly bragging about their ability to target, track, and profile people at an individual level. The way that the ad-tech industry uses unique identifiers - to track individuals’ every move across sites, apps, services, and devices over time for behavioral advertising - is incompatible with the concept of de-identification.

For example, a recent report by Open Rights Group and Cracked Labs explains how one large ad-tech company, LiveRamp, assigns a specific “RampID” to each person that is tied to their name, home address, device IDs, and the IDs held by other data brokers, social, and ad-tech platforms. This RampID is used to let ad-tech companies and data brokers “recognize, track, follow, profile and target people” - but LiveRamp has called it “pseudonymized,” “deidentified,” and even “anonymous.” To rein in these deceptive practices and give consumers meaningful choice over who gets to know and use their most intimate details, it must be clear that ad-tech companies can no longer use these word games to circumvent consumers’ opt-out rights.

3. We can’t let the Privacy-Enhancing Technologies pilot program be weaponized by big tech bullies

While the Privacy-Enhancing Technologies (“PET”) pilot program seems positive, ad-tech companies have established a track record of privacy-washing without taking meaningful action to improve consumer privacy. Worse, big tech companies like Google, Apple, and Meta have been defining “privacy” in alignment with their own commercial interests and weaponizing PETs and privacy rhetoric to bolster their dominance to the detriment of advertisers, consumers, and publishers. For the PET pilot program to incentivize true privacy enhancements, it is integral that adequate governance and resourcing are established to ensure truly independent auditing and insulate the program from being undermined by big tech lobbying and influence.

Advances Protections for Sensitive Data, But Gaps in Enforcement

Melinda Sebastian, PhD, Senior Policy Analyst, Data & Society

The American Privacy Rights Act of 2024 is very ambitious and similar in spirit to Europe's GDPR — but creates a much bigger umbrella of what is considered sensitive data. Because of that, if passed, the bill would represent the most comprehensive approach to privacy on the federal level, and it also includes a requirement for privacy impact assessments. These could be a positive step towards accountability, achieved through standardized reporting on industry actions with consumer data. Yet policymakers must also consider whether the current FTC has the capacity to execute the bill’s many directives, including establishing a new bureau that is "...comparable in structure, size, organization, and authority to the existing bureaus within the Commission related to consumer protection and competition."

There are gaps in how the bill describes enforcement capacity on the government side, while offering more details on the industry capacity. The bill outlines that the FTC is the major enforcing agency, with support from state attorneys general, and the bill also gives individuals the ability to sue with a private right of action. Although the bill details a Victims' Relief Fund, and a GAO study to address hiring costs for state attorneys general, it does not detail an increased budget or means of funding for an expanded FTC. While the bill is very clear on mission, scope, and timeline, it is far less so when it comes to the practicalities of funding a new bureau. In conceiving of capacity on the industry side of things, the bill’s language about exemptions makes clear that for smaller companies, including nonprofits, capacity would not be an issue of budgetary concern. This is because the bill targets larger companies or "covered entities" who do have the capacity to execute the directives of this bill. This puts the majority of the burden to comply with a comprehensive set of standards squarely on the industry side. There is certainly capital and people power to make that happen, and that offers a promising potential for change.

It is evident that a great deal of effort went into creating a bill that comprehensively protects consumers and seemingly also intends to uphold existing "real world" civil rights protections in a digital space. What is less clear from the bill in its current form is where the funding to make this possible would come from. More detail on that (as the bill does provide on the Victims' Relief Fund, for example) will be necessary to understand the specifics of how these new positions would be funded.
