India’s Hate Speech Crisis and the Myth of Neutral Platforms

David Sathuluri / Feb 24, 2026

Activists with posters attend a rally during a "One Billion Rising" event, a global campaign calling for an end to violence against women and girls, in Kolkata, India, Tuesday, Feb. 24, 2026. (AP Photo/Bikas Das)

On January 13, 2026, the India Hate Lab, a project of the Center for the Study of Organized Hate (CSOH), released its annual report on hate speech events in India for 2025. It documented 1,318 verified hate speech events targeting Muslims and Christians across 21 states, one union territory, and the National Capital Territory of Delhi. An average of four events a day were reported, a 13 percent increase from 2024 and a 97 percent increase from 2023. More than 1,200 of these events left a digital trace, including videos from 1,278 incidents that were first shared or livestreamed on social media platforms, with Facebook accounting for 942 initial uploads, followed by YouTube, Instagram and X.

This escalation also came after a year in which platforms dialed back their own guardrails. On January 7, 2025, Meta announced an overhaul of its content moderation system, replacing third-party fact checkers in the US with crowdsourced Community Notes, relaxing hateful conduct policies, and narrowing automated scanning to fewer categories of content. Across the industry, trust and safety teams were being thinned out or restructured, with companies like X and TikTok cutting moderation staff and shifting more responsibility to automation.

While India just wrapped the India AI Impact Summit 2026 under the banner of “Safe and Trusted AI,” the India Hate Lab’s findings show how, in practice, existing social media systems, with their recommendation engines, live-streaming tools, and group features, continue to function as core infrastructure for organized hate. In India, as in other nations, what we see emerging is not just a content moderation problem but a systemic risk.

The anatomy of coordinated hate

The CSOH report offers a rare quantitative window into how Hindu nationalist networks have weaponized social media as mobilization infrastructure. Of the 1,318 documented events, 88 percent occurred in states governed by India's ruling Bharatiya Janata Party and its coalition partners. The most prolific organizers were the Vishwa Hindu Parishad (VHP) and its militant youth wing, the Bajrang Dal, Hindu nationalist groups repeatedly linked to anti-Muslim, anti-Christian and other anti-minority violence. Together they orchestrated 289 events, systematically livestreaming speeches that called for violence, propagated conspiracy theories about Muslim "land jihad" and "love jihad," and urged economic boycotts. These weren't isolated incidents but nodes in a coordinated network, amplified through granular social media infrastructure that mirrors India's administrative geography down to the district and village level.

When a terror attack struck Pahalgam in April 2025, this hate machinery activated instantly. Within 16 days, India Hate Lab documented 98 hate speech rallies nationwide, many featuring speeches urging Hindus to "pick up weapons," calling Muslims "vermin" that must be "burned," or demanding that they be sent to Pakistan. Videos circulated within minutes. Facebook groups with hundreds of thousands of members shared the content. Instagram accounts representing local Bajrang Dal units cross-posted to state and national networks using Meta's Collab feature. YouTube channels livestreamed the events. The algorithmic logic of engagement (likes, shares, comments) ensured the most inflammatory content traveled furthest.

This isn't what Meta calls "Coordinated Inauthentic Behavior" (CIB); that term applies specifically to networks where fake accounts are central to the operation. The accounts mobilizing hate speech in India are real, verified, and often tied to prominent political figures and organizations. What makes the activity systematic is the deliberate exploitation of platform features like live streaming, recommendation algorithms, group mechanics, and cross-posting tools to manufacture virality for violent rhetoric. By this, I mean real, named infrastructure: party IT cells running WhatsApp trees from Delhi down to booth-level groups, district-tagged Facebook pages for BJP and VHP units, and Instagram handles for local Bajrang Dal units that cross-post the same hate clips within minutes. A speech in a small town can circulate through that network (national page, state page, district group, booth WhatsApp) in the time it takes people to scroll their feeds. The coordination happens through authentic infrastructure: WhatsApp group hierarchies mapped to India's administrative geography, Facebook pages run by official BJP and VHP units, and Instagram accounts representing local Bajrang Dal chapters. This is, in some ways, more concerning than classical CIB. There is no deception about identity. The hate is operating in plain sight, amplified by platform design choices that reward engagement regardless of content.

When regulation meets reality

India's regulatory response has centered on takedown velocity. The Information Technology Rules of 2021 require platforms to remove content within 36 hours of receiving government orders. Platforms that fail to comply lose safe harbor protections under Section 79 of the IT Act, exposing them to liability for user-generated content. In November 2025, amendments restricted takedown authority to senior officials, ostensibly to prevent arbitrary censorship. By that point, India had already established itself as the global leader in YouTube video removals. According to YouTube's transparency data, India logged 2.9 million video takedowns in the final quarter of 2024 alone, the highest of any country globally and nearly 30 percent of all videos removed worldwide.

But velocity doesn't address coordination. The IT Rules treat hate speech as individual content violations—discrete posts to be identified, flagged, and removed. They say nothing about the organizational networks behind the content, the infrastructure that enables simultaneous uploads across platforms, or the algorithmic systems that reward outrage with distribution. A speech can be taken down from one account and immediately reappear through many others; a page can be banned and then reconstituted under a slightly different name.

The case of BJP legislator T. Raja Singh illustrates the gap starkly. Facebook and Instagram banned him in 2020 under Meta’s Dangerous Individuals and Organizations policy. Yet the 2024 hate speech report documented that he still had a strong presence across Meta’s platforms, with over 1.1 million followers and members through proxy accounts, fan pages and supporter groups that routinely promoted his events and amplified his hate speeches. Only after that report did Meta quietly remove three large Facebook groups and four Instagram accounts tied to him. The underlying enforcement problem remained: the rules are designed to react to individual pages, not to the coordinated ecosystem that springs up to keep a banned politician’s content circulating.

This is the structural mismatch at the heart of India's regulatory approach. Content takedowns address symptoms. Systemic risk requires diagnosing the disease.

The DSA model: seeing the system

Contrast this with the European Union's Digital Services Act (DSA), which entered full effect in 2024. For Very Large Online Platforms, those with more than 45 million monthly active users in the EU, the DSA imposes obligations that go beyond individual content. Article 34 requires annual risk assessments covering how platform design, algorithms, and operational choices create systemic risks to fundamental rights, public security, and electoral processes. Article 35 mandates mitigation measures proportionate to identified risks, which could include redesigning recommendation systems, adjusting content distribution logic, or strengthening enforcement in high-risk contexts. Article 37 requires independent annual audits to verify compliance. Penalties for failure can reach 6 percent of global annual revenue.

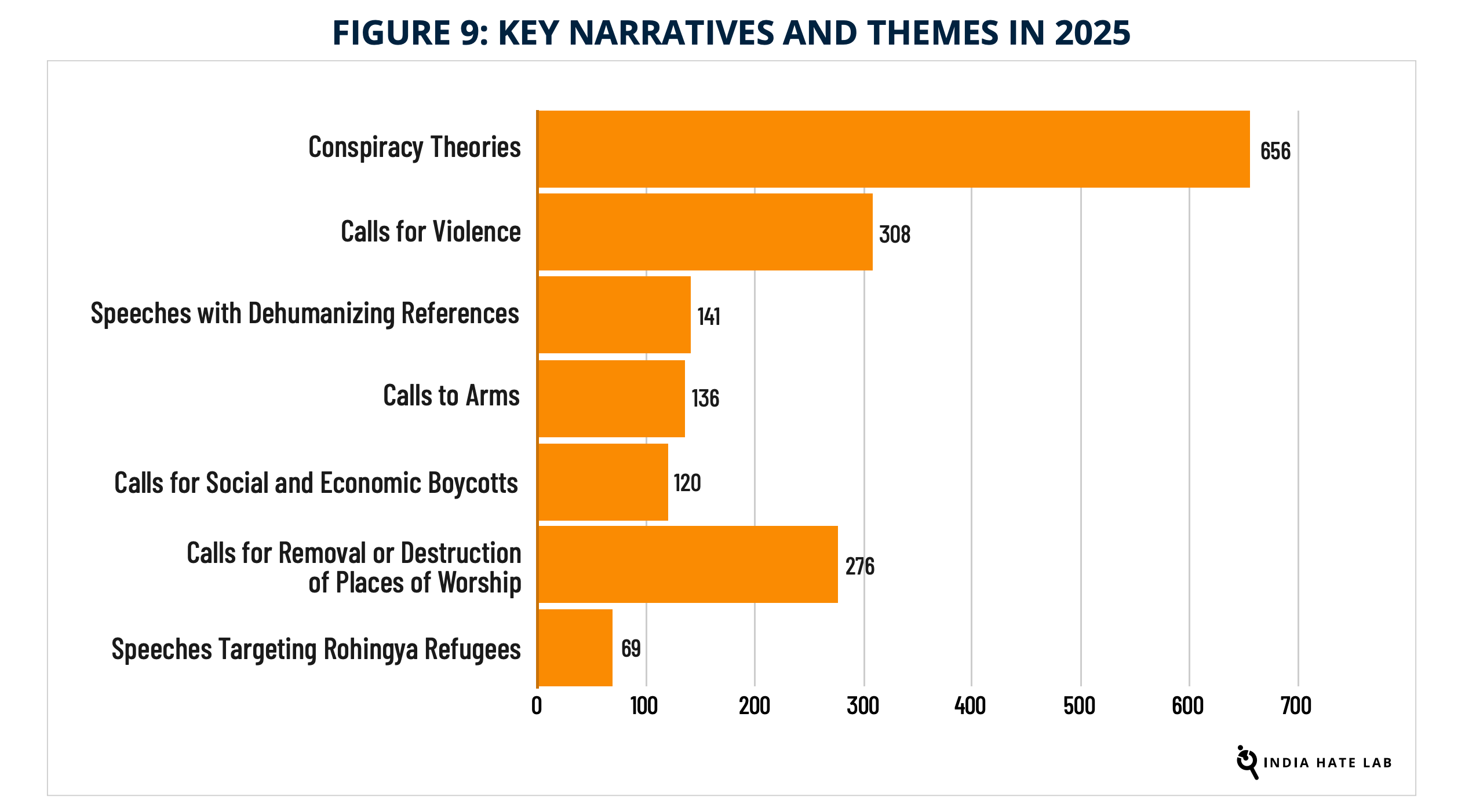

The DSA's risk categories map well onto what is documented in India: illegal content (explicit incitement to violence appeared in 308 speeches); threats to fundamental rights (dehumanizing language in 141 events specifically targeted Rohingya Muslim refugees residing in India, and calls for the destruction of mosques and churches appeared in 276); risks to public security (coordinated mobilization following the Pahalgam attack); and threats to electoral processes (656 speeches propagating conspiracy theories during election periods).

Source: Hate Speech Events in India, 2025, Center for the Study of Organized Hate (CSOH)

But the DSA's real innovation isn't the categories. It's the lens. By framing platform responsibility around systemic risk rather than reactive moderation, it acknowledges what researchers have long argued: that harms at scale emerge not from individual bad actors but from the interaction between organized networks and platform architectures that reward engagement over safety. A system optimized for virality will amplify outrage. A system that enables micro-targeted mobilization will be exploited by actors with the resources and coordination to do so. The question isn't whether platforms can catch every violating post. It's whether they're designed to create conditions where coordinated hate campaigns can thrive.

What oversight actually requires

Meta’s new ‘more speech, fewer mistakes’ approach doesn't just relax its hate speech and hateful-conduct rules; it also signals a retreat from treating its own systems as structurally responsible for how hateful content spreads. In India, where Meta’s third-party fact-checking program technically remains in place, that shift in how the company handles hateful content and its amplification is potentially far more consequential than the branding change to Community Notes. Crowdsourced moderation sounds democratic until you examine the evidence. On X, where the model originated, only 5.7 percent of proposed notes about election content were posted in the month before the 2024 US election. Users viewed misleading content thirteen times more often than they saw corrective notes. In polarized contexts, exactly where hate speech flourishes, Community Notes requires consensus across political divides before a note goes live. The result is that politically charged content, the kind most likely to incite harm, often escapes annotation entirely.

For India, this creates a worst-case scenario. Meta's January 2025 changes are US-only for now, but the company has signaled potential international expansion. Meanwhile, the infrastructure documented in the CSOH report remains fully operational: district-level Instagram accounts for the Bajrang Dal, Facebook groups with hundreds of thousands of members sharing trophy videos of assaults on Muslims, and YouTube channels livestreaming hate rallies with impunity. The platform features that enable this (algorithmic amplification, cross-posting mechanics, live streaming, group recommendation systems) aren't governed by India's IT Rules because those rules don't see them.

Building meaningful oversight requires three components largely absent from current debates:

First, transparency obligations should go beyond takedown statistics. Platforms should be required to publish data on algorithmic amplification of violating content—not just how many posts were removed, but how many users they reached before removal, which recommendation systems distributed them, and whether certain categories of actors (verified accounts, high-follower pages, coordinated networks) receive differential treatment. Research access provisions, like those in DSA Article 40, would allow independent scholars to audit these claims.

Second, risk assessment frameworks should be calibrated to local context. The DSA model is a starting point, but systemic risks in India look different from those in Europe. Any framework would need to account for the role of political patronage (88 percent of documented hate events occurred in BJP-ruled states), linguistic diversity (content moderation failures are worst in regional languages), and the specific mechanics of Hindu nationalist mobilization (religious processions and trishul ceremonies that are filmed, livestreamed and then pushed through WhatsApp groups and local Bajrang Dal groups and pages mapped to India's administrative layers at the state, district and block level). Risk assessments conducted by platforms themselves, as the DSA requires, need external validation, which means empowering regulators with technical capacity and political independence.

Third, mitigation measures should address coordination, not just content. This is the hardest piece. Platforms resist being told how to design their systems, invoking both technical complexity and speech concerns. But there's a difference between requiring removal of lawful speech and requiring platforms to stop amplifying coordinated campaigns that violate their own policies. Mitigation could include de-prioritizing content from accounts that repeatedly participate in coordinated harassment, disabling simultaneous cross-posting features for groups associated with organized hate, or adjusting recommendation algorithms to reduce distribution of content flagged (even if not removed) for policy violations. None of these measures is about banning viewpoints; they are about changing how harmful content is boosted. In a country like India, that kind of targeted intervention is both necessary and, when done transparently and under clear legal safeguards, well within the bounds of constitutionally reasonable restrictions on speech. These measures require platforms to stop designing for maximum engagement when engagement means maximum harm.

Beyond whack-a-mole

The CSOH data makes clear what's at stake. Hate speech in India isn't declining but consolidating. The same organizations appear year after year as top mobilizers. The same platforms serve as infrastructure. The same algorithmic incentives reward extremity. What's changed is the normalization: hate speech events that would have drawn scrutiny five years ago now occur at a baseline of four per day. Chief ministers deliver speeches urging violence. Cabinet ministers call for economic boycotts. Influencers with verified badges and hundreds of thousands of followers post “trophy videos” of assaults. The content circulates. Platforms issue statements about their community standards. Little changes.

Meta's turn away from fact checking in the US isn't what created India's hate-speech machinery, and the status quo in its India operations was already failing Muslim, Christian and other minority users. What these changes do is strip away even the thin layer of friction that existed before, in a context where platforms were already amplifying organized hate faster than they were ever willing to check it. For years, the debate has centered on whether platforms are neutral conduits or publishers, whether they can moderate speech without bias, whether they are suppressing conservative voices or enabling hate. These are the wrong questions. The right question is whether we are prepared to regulate the systems that determine which speech gets amplified, which networks get recommended, and which content goes viral. Treating that as a systemic risk problem rather than a content takedown problem requires seeing platforms as infrastructure, not just intermediaries.

India has the data. It has evidence. What it lacks is a regulatory framework that matches the scale of what's documented. The CSOH report should be a turning point. Whether it becomes one depends on whether policymakers are willing to look past individual posts and see the machinery behind them.
