Playfully Uncovering AI's Serious Novelty

Sylvan Rackham / Jun 11, 2023

Advanced AI systems exhibit behaviors that surprise even their creators. To embrace and prepare for the associated risks and opportunities, we must search out novel perspectives beyond the dominant ‘doomer’-optimist dichotomy. One approach is open-minded experimentation with AI rather than rushing to conclusions developed for technologies of the past, says Sylvan Rackham, Research Fellow at ToftH and recent University of Cambridge MPhil Technology Policy graduate.

AI is receiving a lot of hype right now, with many opinions about potential applications, risks and viability.

There is often a bubble-like quality to these opinions with some being repetitive, inflammatory or (perhaps purposefully) misinterpreting features of AI's development – some manage to do all three. This occurs on both sides of the debate and seems to draw discourse towards a cocktail of the loudest pundits’ most shocking dystopian or utopian sci-fi analogies and away from what I believe is a more stable, considered and inclusive development of AI.

A lot can be learned from reading the words of loud pundits, not least that they give perspectives of the opposing camp that neither can articulate about themselves. There is one critique of ‘doomers’ from venture capitalist Marc Andreessen’s latest post which I think is actually more usefully applied to both sides – many of the extreme scenarios we hear are simply opinions from people who don’t have any “secret knowledge.”

AI can sound (and sometimes feel) like magic, only understandable by what clickbait headlines call “geniuses”, “boffins” or, more calmly, “experts”. The full technical details of AI are undeniably esoteric but the concerns and excitements are not – we all can, and should, imagine what it may mean to have AI which enables new experiences, or what it may be like to be automated out of a job. Fortunately, a lot of the AI being discussed, unlike most previous cutting-edge technological developments, is largely open, free and easy for anyone to test. So, through serious play, I hope we can all help define the conversation about, and the development of, AI.

This is not intended as an AI apology or blind optimism. Rather, I seek to refine, combine and communicate the exposure I have been fortunate to have with many of the leading voices in AI's design, application and governance over the past months of my fellowship at ToftH, a nonprofit educational organization that considers the relationship between philosophy and technology. I hope this post will complement your existing AI knowledge (of any level) with three suggestions for how to engage with this often amorphous and complicated topic productively.

To specify what I mean by AI in this post, I use examples of generative AI, and large language models (LLMs) in particular, since these are behind the recent, popular and accessible AI applications, like ChatGPT, DALL-E, Bard, Midjourney and Stable Diffusion (plus a few more I will mention in the final section). However, the suggestions apply to deep learning approaches more broadly.

Who is this for?

This post is for anyone who wants a framework with which to assess the developments of AI and know when they’re getting dragged into other (likely very interesting) tangential discussions. More specifically, this is for (nearly) anyone who:

- Wants to have novel experiences using AI.

- Makes products by applying existing AI models - to highlight how to escape obvious approaches.

- Invests in AI - to help identify what currently unnoticed futures will be afforded.

- Makes policy - to get a clearer view of AI and its novel implications (both its risks and potential) rather than seeing it as a continuation of existing technologies.

Three ways to notice novelty, develop a more productive approach and avoid common conversational treadmills

In an attempt to make AI easier to understand and discuss, I have tried to identify areas of common ground between the opposing sides of the AI debate. I’ve distilled these findings into three suggestions to provide a means for you to identify common tangents, avoid getting dragged into them, and notice the unexpected elements of AI.

1. Recognizing the importance of power dynamics, but not reducing AI to them.

Perhaps the most popular framing of AI is as just another means to consolidate or distribute power, whether through its enormous compute requirements, access to training data, geopolitics, or its potential effects on labor. These are worthy conversations to have, but the issues discussed are almost never novel to AI and can be found in writing from the past ~200 years.

One of the most prevalent discussions is the social effects of automation, which, to me, echoes critiques found in places like Benjamin's ‘The Work of Art in the Age of Mechanical Reproduction’, Marx's ‘Fragment on Machines’, Engels’s ‘On Authority’ (which takes the exact opposite view to Marx) and Capek's ‘R.U.R.’, to name just a few. A very reductive thematic summary across all of these is: there are tasks which humans like doing or should be doing, and automation will make these more or less accessible. As just one example of this, how similar does this quote from Capek’s R.U.R., released over 100 years ago, sound to today’s automation optimists?

…within the next ten years [Robots] will produce so much wheat, so much cloth, so much everything that things will no longer have any value. Everyone will be able to take as much as he needs. There’ll be no more poverty. Yes, people will be out of work, but by then there’ll be no work left to be done. Everything will be done by living machines. People will do only what they enjoy. They will live only to perfect themselves.

These authors provide a backdrop and conceptual history for what we are experiencing today with AI. To focus only on such arguments, however, ultimately grounds the conversation in outdated concepts and expands it so much we miss the specificity that is crucial to understanding. These are part of a broader conversation about who/what we trust to take on activities that have historically been considered exclusively for humans and what the social implications may be.

To contradict myself a little, here is a small tangent if you do want to discuss AI as relating to power.

Automation as its own topic does matter, so I would recommend two books from the past few years. Daniel Susskind's A World Without Work provides a modern take on this issue, including the potential and risks of Universal Basic Income. Azeem Azhar’s Exponential covers a broader range of topics but has a good point on successful organizations growing their headcount with automation, while ‘bad’ ones just follow trends without finding new roles for staff.

Also, given AI does have implications for power dynamics and politics, how can we know when it is suitable to view it in these terms? Langdon Winner’s article “Do Artifacts Have Politics?” is a useful guide when assessing any technology in this way. James Plunkett refines this down to four means to review any technology’s political implications: its use, its default beneficiaries, its compatibility with political systems, and the required socioeconomic conditions for it to thrive.

Ok, let’s get back to the point: AI can be used to gain and consolidate power, and many people (including me) want to and should work on the implications. However, we must acknowledge this and then look beyond to find what novel applications and experiences are possible, and what sort of new institutions we need to take advantage of and provide protection from them.

2. Questioning assumptions about 'human characteristics' and decoupling them from AI’s assessment.

So, in an attempt to avoid trying to answer the question "what does it mean to be human?" I will assume we can agree that there is no universal answer. If this is the case, you'll likely see that the effort to compare AI to humans as a collective quickly seems to lose its meaning, as there is no meaningful center or ‘average human’ with which to make a comparison.

Do all humans think in the same way? Can all humans see? Can all humans walk? The list goes on and to me (and many others), that is a great thing – making space and exceptions for differing modes of interacting with the world not only increases inclusivity but also provides opportunities for novel and potentially beneficial perspectives to flourish. Just two examples of contemporary authors writing about this broader topic are Caroline Criado Perez and Matthew Syed.

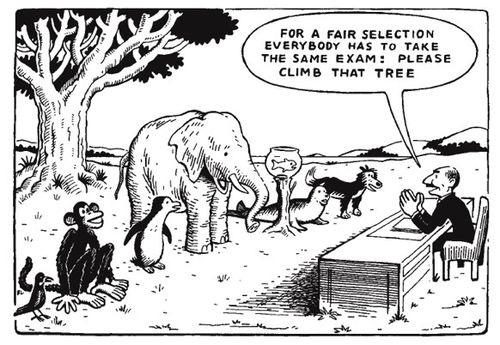

Implicitly, however, we still seem to want to develop towards our own type of intelligence. Many tests (including the Turing, Coffee, Robot College Student, Employment and Flatpack Furniture tests) and various lists of assessment criteria for artificial general intelligence (AGI) are based on supposedly universal ideas of human intelligence. That intelligence takes different forms is not a novel point to raise, and in the past, I have seen it applied to the differing learning capabilities of children – famously depicted in a metaphorical cartoon assessing animals' ability by asking them to climb a tree.

We are seeing a disjunction of things we previously thought had to be grouped together for intelligence and so must avoid reliance on outdated human-centric measures (e.g. Is it conscious? Can it pass the Turing test? Does it understand the meaning of its own output?).

ChatGPT, Google's Bard or Bing AI demonstrate extraordinary speed and abilities to replicate writing styles but fail at showing a conventional understanding of the topics or how they obtained the ability to respond (for more on this, check out this blog post from Rohit Krishnan). This may not be a lasting quality of these large language models (LLMs), with products like Perplexity seemingly able to provide the verifiable context we desire. However, by fitting LLM chatbots into our current (perhaps questionable) expectation that when we ask a computer a question it must give us ‘truthful,’ reliable information, we miss the opportunity to experiment and find new ways to interact with this novel technology beyond the paradigms of typical software and human interaction.

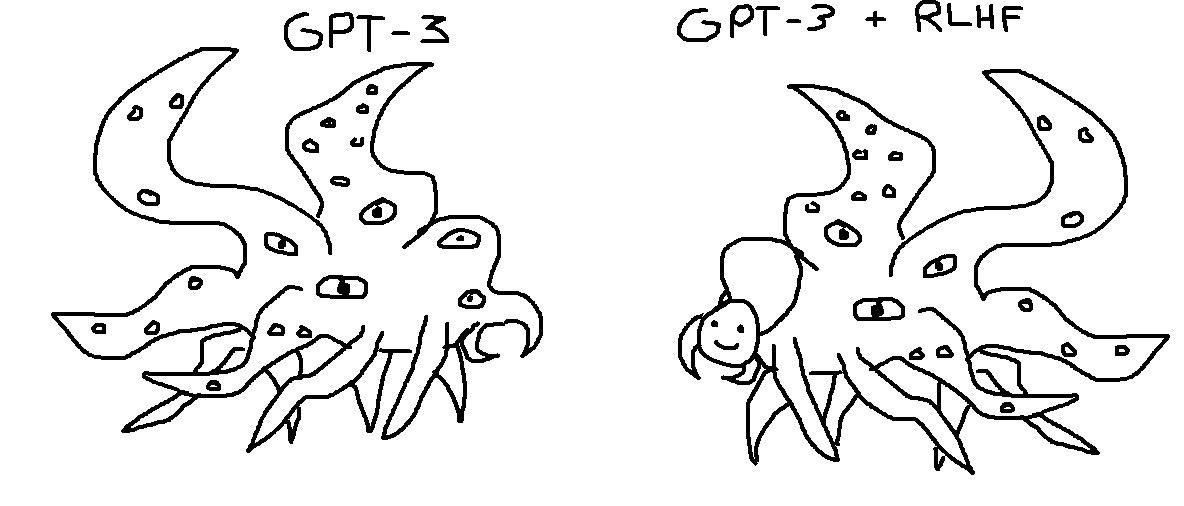

How we interact with LLMs is a hot topic with varying opinions on whether there should be an option to use basic models which haven’t gone through the cultural sensitivity and conversational training which makes ChatGPT and Bard so easy to interact with. On one hand, there are claims that the ‘wokeness’ in this training limits the model's capabilities, while on the other there is an understandable desire to reduce harmful generated content. One cartoon shared on social media shows GPT-3 (the predecessor of the GPT-3.5 model behind the initial release of ChatGPT) as a "weird alien" with a happy mask as a metaphor for the human-friendly interface included in ChatGPT developed through Reinforcement Learning from Human Feedback (RLHF).

Whilst I like the playfulness of this depiction, I think it is misleading about our view of and relationship with AI. I believe the fear and perceived danger of AI is not in its 'alien' form or current function, but in the expectations we have of where we are willing to apply it. We fear reckless implementation in the name of the human implementers' desires.

This sounds a little bit like reducing AI to power, doesn't it? I'll let you explore that topic with the links already provided and focus more on avoiding using humans as a benchmark for AI. What the alien cartoon does show us is a novel type of intelligence/being/thing which we can investigate and over time perhaps understand its novel applications to existing problems, behaviors, and experiences we did not anticipate.

3. Appreciating how different the development of AI is compared to other technologies.

The final approach I'll mention is perhaps the simplest and one I have heard several times from those developing AI. Deep learning in particular is notably different from previous technologies. I don't mean that in a clickbait-headline type way, but as a means to distinguish it from the history of engineering as a skill to build machines which do not deviate from their intended design. The ‘mistakes’, ‘hallucinations’, and the current impossibility of fully understanding why a system came to a particular conclusion highlight this novelty.

One suggestion is that we can think about AI more like a biological system (without overextending the analogy), where we discover features, rather than like an engineering design, such as a bridge, where one must know the outcome and function before building it.

For some, this can lead to some pretty weird questions, such as are we discovering a life of its own when developing these systems? But the real point of this biological framing is to move away from a desire to optimize for output and accept what may seem like mistakes as essential (but not always relevant) representations of how a model is making sense of input data and any problem it faces. For example, two models may produce the same output, but the way they get there can be completely different, and the models themselves provide no means of knowing which will perform better at seemingly related tasks.

Appreciating the difference between AI and existing technologies seems particularly important when discussing AI policy (for both its stimulation and the mitigation of risks). Insights from existing technologies will miss novel risks and opportunities by focusing on those known to us.

We are faced with big questions, such as what data can or should LLMs be trained on? What are the copyright laws for images or text outputs? What are ‘facts’ and when do we need them? None of these can be answered effectively by treating AI purely as a product of traditional engineering design since for now, LLMs have their own unpredictable characteristics. Searching for existing regulations in domains beyond those for technology seems like a good place to start to find appropriate solutions. In asking questions about how copyright works when animals are involved in a creative process or where we alter our expectations about humans providing truthful information, we may uncover suitable ways to regulate our new companions and perhaps update our understanding of ourselves and the world around us whilst we’re at it.

Practical ways to experience this novelty

I hope and expect that using the three suggested approaches will open up some new ways to understand, use and develop AI. To me, it has become clear that generative AI applications already hold the power to enable experiences beyond existing technology. Removing the expectation that we will be given perfect finished products, and appreciating instead the inspiration and collaboration these tools offer, is in my view perhaps the most liberating and exciting shift. To give an idea of what I mean, here are some examples of popular applications I've been using (beyond ChatGPT and DALL-E) that take 2-5 minutes to try (and here’s one much longer list of generative AI applications if you want more):

Generating dreamlike videos based on text prompts with Deforum.

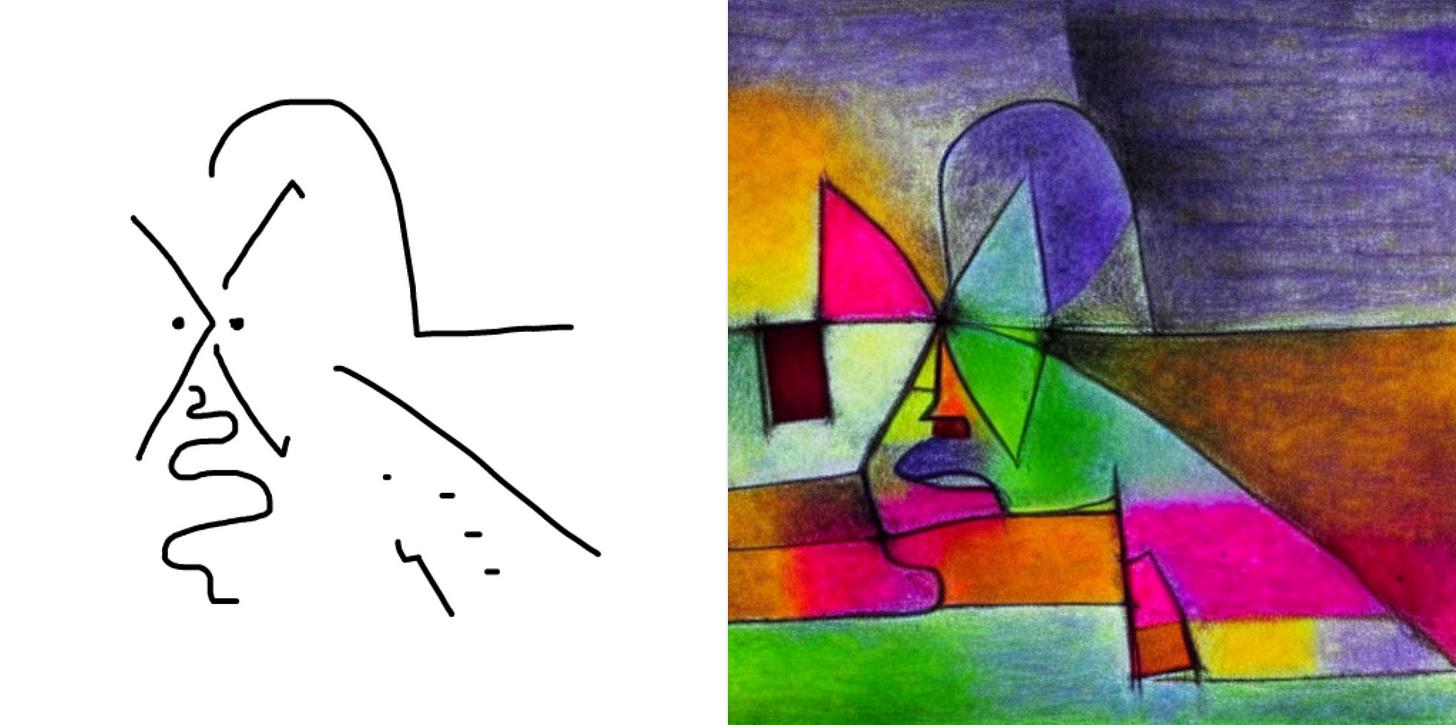

Turning sketches into more detailed and stylized images using text prompts with Scribble Diffusion.

Mutating or combining existing photos to create new images with Midjourney or videos with Genmo.

Asking questions of Perplexity and being given a summary answer with several links for further reading provides a springboard for initiating research (beyond just clicking all the links on a Wikipedia page).

Over to you

I wish I could point at exactly the type of novelty I hint at throughout this piece, and I am currently trying to build experiences towards this goal – watch this space. I would love to continue this conversation and get your perspectives, and I invite the reader to connect. But for now, please consider this post as a rallying call to take a slightly more curious and playful approach to developing what may be one of the most serious and impactful technological developments in human history.
