Reconciling Agile Development With AI Safety

Steven A. Kelts, Chinmayi Sharma / Dec 6, 2023

For AI safety frameworks to have their intended effect, the tension between their goals and the realities of development practices in industry, such as Agile life cycles, must be reconciled, say Steven Kelts and Chinmayi Sharma.

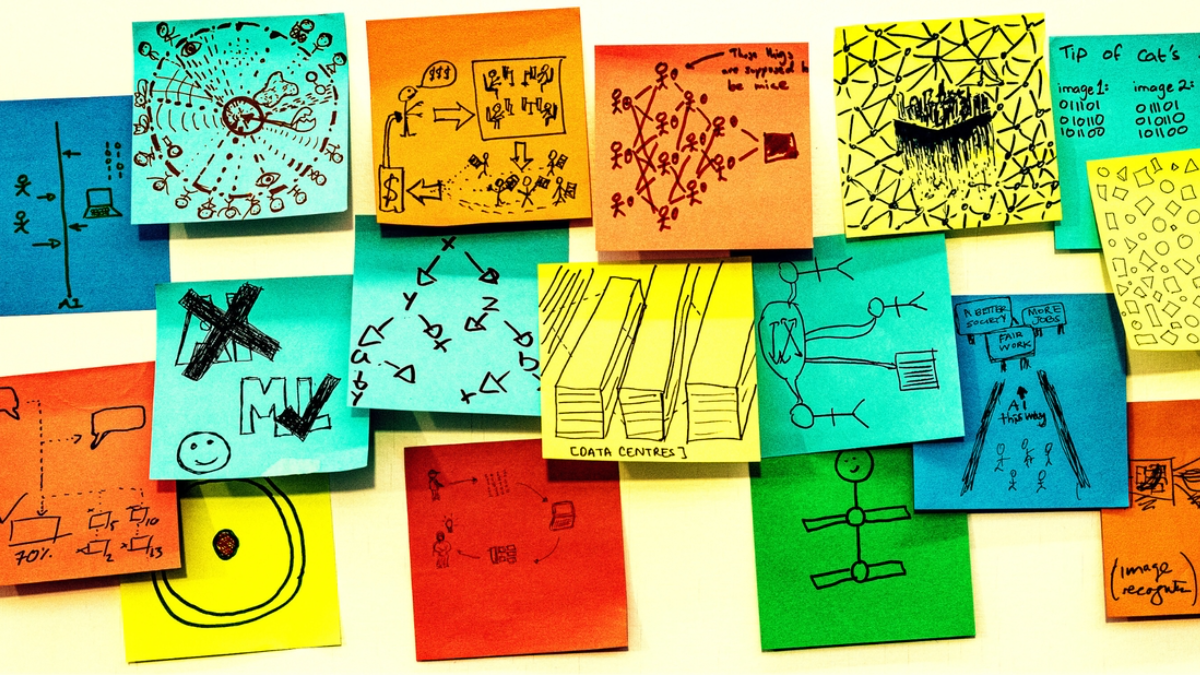

Rick Payne and team / Better Images of AI / Ai is... Banner / CC-BY 4.0

In the past few months, governments across the world have released a roll-call of frameworks to achieve the responsible development of artificial intelligence. While the proposals are laudable, the devil will be in the details of implementation. Ensuring responsible development will require processes that the software industry can deploy on a day-to-day and ongoing basis, since implementing safe AI will likely be a project for generations to come.

But where the various government statements fall short is precisely on the question of process: they don’t stipulate how their principles are to be implemented in the fast-paced, Agile development ecosystem employed by most tech companies today.

The AI arms race aside, speed and efficiency have long been the twin pillars guiding Agile development practices (a set of practices we explain and critique below). Where responsible AI frameworks, such as the National Institute of Standards and Technology’s (NIST) AI Risk Management Framework (RMF), seek to induce considered, deliberate action and the employment of a precautionary principle, Agile is specifically designed to maximize productivity in breakneck “sprints.” Unless the edicts of responsible AI guidelines are made compatible with AI development practices on the ground and the rapid pace of industry, all the government guidelines in the world will be little more than paper tigers.

Government Responsible AI Frameworks

Last month, the Biden Administration issued an executive order on “Safe, Secure, and Trustworthy Artificial Intelligence (AI),” setting the tone for the executive branch’s intent to engage in this space, both in terms of the federal government’s use of these technologies and its influence over the private sector’s development of them. In the EO, the White House endorsed the practices enshrined in its Blueprint for an AI Bill of Rights, released last year shortly before the launch of OpenAI’s ChatGPT. And it endorsed part of the NIST RMF (though as we will argue in the next section, not enough). The Bill of Rights and NIST Framework embody the principles of continuous, measurable, accountable, and well-documented evaluations of AI system effectiveness and risks. They include, among other things:

- Developing systems in consultation with diverse communities and domain experts to identify and mitigate risks;

- Mapping, or documenting, systems components, data flows, and interactions with other systems and environments;

- Subjecting systems to pre-deployment testing, risk identification, mitigation, and continuous monitoring to ensure safety and effectiveness using concrete, measurable, well-defined standards and requirements;

- Minimizing system discrimination through proactive equity assessments, using representative data, protecting against demographic proxies, ensuring accessibility for people with disabilities, conducting pre-deployment and ongoing disparity testing and mitigation, and establishing clear organizational oversight;

- Building in privacy protections, including data minimization, meaningful user notice and explanation, user controls over their data, and opt-out capabilities;

- Designing risk-management controls and contingency plans;

- Conducting and making public independent evaluations of systems and compliance with the aforementioned principles when possible.

Similarly, this past week, eighteen countries signed an agreement that says AI must be secure by design in order to be safe. The first of their kind, the Guidelines for Secure AI System Development were written collaboratively by various government, security, and intelligence entities and the private sector, in an effort led by the UK’s National Cyber Security Centre and the US Cybersecurity and Infrastructure Security Agency. These guidelines are more technical and operational than the Blueprint for an AI Bill of Rights, which focuses primarily on ethical considerations, or the NIST RMF, which focuses on system safety and effectiveness generally, but they still echo the same principles and practices espoused by both: security-minded development at every stage of the software lifecycle.

To be clear, neither of these frameworks imposes any concrete requirements on AI companies today: the Secure AI guidelines are purely voluntary, and even the legally binding White House EO is limited in scope. The EO was groundbreaking in that it uses the Defense Production Act to require private companies to report ongoing or intended training or production of potential dual-use foundation models, the NIST-recommended red-teaming efforts undertaken to ensure their safety and security, and the cybersecurity practices adopted to protect model weights.

However, one problem is that these requirements only apply to models trained by a company using a quantity of computing power greater than 10^26 integer or floating-point operations. No publicly available model today meets that criterion. The next generation of models may, but this threshold still leaves three major gaps:

(1) it does not require companies productizing these foundation models to report anything related to their fine-tuning, red-teaming, or cybersecurity measures over weights;

(2) it does not cover open source models and weights not developed by companies; and

(3) it does not account for the fact that as the technology progresses, we may see more sophisticated models capable of functioning with less compute power; in other words, many next-generation models may be more powerful but may not meet the EO’s threshold requirements.
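To make the scale of the threshold concrete, here is a rough back-of-the-envelope sketch. It assumes the commonly used rule of thumb that training compute is roughly 6 × parameters × training tokens; that heuristic is not the EO’s accounting method, and the model sizes below are hypothetical values chosen purely for illustration.

```python
# Back-of-the-envelope sketch only. The 6 * N * D approximation is a common
# rule of thumb for training compute, NOT the executive order's own method.
EO_THRESHOLD_OPS = 1e26  # reporting threshold stated in the executive order


def estimated_training_ops(n_parameters: float, n_tokens: float) -> float:
    """Approximate total training operations as 6 * parameters * tokens."""
    return 6.0 * n_parameters * n_tokens


def exceeds_eo_threshold(n_parameters: float, n_tokens: float) -> bool:
    """Return True if the estimate is strictly greater than the threshold."""
    return estimated_training_ops(n_parameters, n_tokens) > EO_THRESHOLD_OPS


# Hypothetical model sizes (parameters, training tokens), for illustration.
hypothetical_models = {
    "mid-size model": (7e10, 2e12),         # 70 billion params, 2 trillion tokens
    "much larger model": (2e12, 1e13),      # 2 trillion params, 10 trillion tokens
}

for name, (params, tokens) in hypothetical_models.items():
    ops = estimated_training_ops(params, tokens)
    print(f"{name}: ~{ops:.1e} operations, reportable={exceeds_eo_threshold(params, tokens)}")
```

Under these assumptions, the first hypothetical model falls about two orders of magnitude below the threshold, which illustrates the third gap: a capable model trained with less compute would never trigger the reporting requirement.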

It is possible that the EO may have trickle-down effects when agencies governing specific critical infrastructure sectors formally adopt NIST RMF practices into their safety and security guidelines, implicitly forcing federal contractors serving them to comply regardless of model compute power. But this still leaves a gap: what of all the private sector companies building powerful, but not the most powerful, AI products for the public?

This is not criticism per se; the Biden administration could only do so much, legally speaking, with an Executive Order. But these gaps foretell the need for more direct requirements that industry adopt safety-minded practices. Given the international momentum around responsible AI regulatory interventions, we have every reason to think more direct mandates are on the way.

But will the software world be ready for them when they come?

That requires taking a good, hard look at how software is currently developed, and asking whether today’s industry practices are up to the task of responsible design. We think they are not… yet. But if further regulation mandated that a wider swath of the industry employ something like the NIST RMF more fully, we’d make more progress on the path to safe, secure and responsible AI development, productization and use.

The Implementation of Responsible AI Frameworks in an Agile World

When exploring legal mandates, policymakers must consider two things:

(1) how to help the most responsible actors comply with these mandates in a way that achieves the stated safety, security, and ethical goals; and

(2) how to prevent the least responsible actors from “gaming” the mandates, doing the least possible to comply with them in an attempt to subvert stated safety, security, and ethical goals.

The AI EO and other responsible AI frameworks are focused on influencing engineer behavior and so, to design successful mandates, policymakers must take into account the engineering realities on the ground. Today’s engineers are Agile—can responsible AI be Agile as well?

Agile development is a reaction to, and is regarded as an improvement on, traditional methods of software development. Before Agile, software development began with a detailed blueprint for a final product and broke the design process down into successive steps to be taken by different specialized teams working in silos. Each step could be taken only when the prior team was mostly done with its work, having documented its decisions. The process was like a stream cascading down a hillside level-by-level (hence the general name for these methods, “Waterfall”). But this approach wasn’t flexible enough to respond to unexpected problems or changes in customer demands; any alterations required downstream would require a shift in the blueprint, and restarting at least some of the project uphill. Think of a boss giving you a six-month project but refusing to look at it until you’re done: they could have prevented you from going down a rabbit hole in month two, but instead, you’re canceling your upcoming vacation and redoing all the work.

Agile methods were meant to avoid this time-sucking process of readjustment. Agile teams iterate on development starting with a “minimum viable product” the customer can test, adding or subtracting features as the customer vision develops. Teams are generally made up of experts in developing different product features (user interface, data retrieval, or whatever), so that the different steps in the cascade can be co-developed all at the same time. In the bigger companies with many experts, teams might even self-organize based on product needs, bringing on new contributors as the product vision evolves. And since development is iterative and incremental, goals are set for very short (often one week) “sprints” in which a new and better version of the product is made. The work of software engineers is in fact broken down even further into discrete tasks, with each developer responsible for reporting on their progress daily in “stand-up” meetings (with everyone who is able literally on their feet, so the meeting is shorter). In an Agile world, your boss, as well as other subject-matter experts, would have been forced to review your work at regular intervals, avoiding the cancellation of your desperately needed vacation.

On one hand, the Agile principles of an iterative approach, regular risk management checks, cross-expertise collaboration, solicitation of third-party feedback at every stage, and adaptability to changing priorities or new findings seem well-suited to the seamless incorporation of responsible AI practices.

However, we think responsible AI development will require a full revamp of the software development lifecycle, from pre-training assessments of data to post-deployment monitoring for performance and safety. Some practices, such as automated algorithmic checks (like tests for data bias and model performance metrics, all of which are part of Stanford’s HELM set of evaluations) can be utilized anywhere in the development lifecycle. Other techniques may be purely ex post, like algorithmic audits. An Agile approach avoids engineering silos, allowing for stage-specific practices to be adopted where necessary, while ensuring stage-agnostic practices are adopted at all relevant stages, and allowing these stages to proceed in tandem. That’s good (and better than Waterfall, where problems wouldn’t be unearthed until the stage where a responsible AI check is done).
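As an illustration of what a stage-agnostic automated check might look like, the following is a minimal sketch of a disparity test that could run at any point in a sprint. The function names, the sample data, and the 0.8 cutoff are all assumptions made for this example; none of them comes from HELM, the NIST RMF, or any other framework discussed here.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of positive (1) predictions within each demographic group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(prediction == 1)
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(groups, predictions):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(groups, predictions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive prediction
    return min(rates.values()) / highest


def passes_disparity_gate(groups, predictions, min_ratio=0.8):
    """Hypothetical gate: fail the build when the ratio drops below min_ratio.

    The 0.8 default is an illustrative assumption, not a mandated standard.
    """
    return disparity_ratio(groups, predictions) >= min_ratio


# Illustrative data: group "a" is selected far more often than group "b".
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
print(selection_rates(groups, predictions))
print(passes_disparity_gate(groups, predictions))
```

A gate like this is cheap to run in every iteration, but it is also a single number that a team can optimize for, so it is best treated as a supplement to adversarial testing and human review, not a substitute for them.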

But on the downside, the Agile software development process used in most tech companies seems tailor-made to compartmentalize information, diffuse responsibility, and create purely instrumental performance metrics (KPIs, OKRs, etc.).

Algorithmic impact assessments (if built on a model more robust than GDPR’s Data Protection Impact Assessments) can govern developers across the lifecycle, and importantly can engage diverse stakeholders to incorporate the perspectives of those affected by a model. But decades of research on cognitive biases in corporate settings suggest that even the best-intentioned employees can be willfully blind to perspectives that complicate their own and to wrongs done through third parties. Additionally, when the lifecycle is composed of overlapping teams with overlapping work at all stages, responsibility is even more diffuse.

And Agile generally prioritizes measurable efficiency above all else. Companies that overvalue goal achievement can find themselves in hot water if their goals are ill-conceived or their employees cut corners to get promised rewards. This could unintentionally incentivize “teaching to the test” (overfitting a model to pass automated checks) and making ethics a mere “checklist” (as some worry about audits). If responsible AI frameworks are adopted through rote box-checking exercises, compliance will be a race to the bottom in terms of quality.

For instance, “red-teaming,” a practice endorsed by most responsible AI frameworks (and the White House EO), is the ex post practice of “crash-testing” a system to see where it is weak and where it breaks. Because it is adversarial in nature, it will expose the brittleness of overfit models and expose teams who went through the motions, merely checking boxes. However, overvaluing goal achievement can mean that even developers made aware of the potential wrongful impact of their work via red-teaming may not act to remedy the situation if their incentives don’t align and they aren’t conscious that the responsibility falls squarely on their shoulders.

Without some serious alterations, Agile is a recipe for algorithmic disaster. And none of the usual remediation techniques can fix it. Impact assessments can only be carried out if the final use case for a model is known; in Agile development, it’s always evolving. Automated data checks require delay before training can begin; Agile is meant to bypass all delays. Preparing for an eventual audit means careful documentation of decisions; Agile is allergic to documentation. Red-teaming can uncover elements of a product that are vulnerable; but the Agile process encourages only a quick fix, not a fundamental rethink.

Even more significantly, Agile is not an easy fit with the sorts of informational requirements and decision-making necessary for ethical action. Agile practitioners can fall right into “blindness” traps, since information that might trigger their moral concerns is kept siloed. No central plan for a product is developed for consideration of all, and choices made by one team member create a dangerous alchemy with those made by others if no one is sharing documentation of their decisions. What happens with one developer stays with that developer, exacerbating their existing tendency to see only what they want to see and shuffle off responsibility onto others. Incentives like pay and promotion are connected tightly with granular goals, and speed in accomplishing those goals means everything. So no one may even take it as their goal to achieve a broader ethical vision for the product.

Agile’s dominance in the software industry is unsurprising. Software generally, and AI specifically, are driven by market speed. Google sat on Bard for years until OpenAI released ChatGPT; within months, not only did Google release Bard, but every other company offering software products or services began advertising AI features as well. The technology industry has a large number of big players that are not profitable; it’s an industry propped up by venture funding. Funding often goes to first movers. And there’s also consumer demand: once OpenAI whetted our appetite, we can’t seem to get enough of AI. The collective effect of these factors has made the speed of AI development more important (to industry) and more dangerous (to us) than ever.

The least responsible actors in industry are likely to exploit this tension, paying lip service to responsible AI frameworks, while minimizing the desired delay and caution these frameworks seek to impose. If some in industry beguile regulators through performative adoption of practices without honoring these frameworks’ animating principles and goals, then the most responsible actors in the industry are left disadvantaged if they choose to diligently apply these frameworks.

Introducing Responsible Agile AI

We can’t get rid of Agile (nor should we), because there are real benefits to be had and because industry can’t do without it. We also cannot surrender our efforts to impose responsible AI frameworks on a rapidly advancing, existentially concerning technology that will touch the lives of everyone, whether they use it or not, one way or another.

So what would we recommend? How do we move to a world of responsible Agile AI? At the core of this effort must be capacity building and information sharing, two challenges that many aspects of technology regulation face, from cybersecurity to robotics. To reconcile Agile life cycles with responsible AI frameworks, we need information regarding how to audit potential training data, how to test for bias, how to consider public safety impacts, and how to red-team for these risks at various stages of the life cycle. And we need the people who have a thorough understanding of this information and know how to operationalize it to be a part of the Agile teams producing this unprecedented technology.

First, we need to train the responsible-development experts that can be embedded in engineering teams, so they become a part of the Agile lifecycle. They must be technically proficient as well as deep experts in security, safety, privacy, and ethical issues. While each employee should be trained to think about broader product impacts at all times (and protected by whistleblower procedures within the company if they see something amiss), there should be designated “ethics owners” on each team whose responsibility is to assess the bigger picture. It is a tall order, but not an anomalous one. The medical industry has long had to reckon with the cybersecurity risks of its devices and networks, the privacy of sensitive healthcare information, the safety of patient care processes, and the ethics of public health. In fact, the American Medical Association has already voted on and released principles for responsible medical uses of AI.

Second, reconciling Agile with responsible AI development will enable robust information sharing across the ecosystem. By this we do not mean the voluntary public-private partnerships that have proven largely ineffective in the critical infrastructure context. We mean mandatory information sharing between industry and the government, an effort set in motion by the reporting requirements laid out in the AI EO for developers of the most powerful models, as well as mandatory information sharing among members of industry. Intellectual property and trade secret concerns cannot be used as pretext for withholding important information uncovered by some members of industry related to ensuring the security, safety, ethics, and effectiveness of AI systems. This information must be seen as a matter of public interest. Effective treatment of maladies cannot be withheld by public hospitals or private practices as a competitive advantage; it is information critical to ensuring public welfare and so belongs in the public domain.

This will necessarily require a partial return to the documentation culture of previous eras and the introduction of thoughtful delay in the software development lifecycle. Documentation allows for the generation of the area knowledge at the center of capacity building and information sharing. The AI EO and the Guidelines for Secure AI System Development recognize this implicitly in their reporting requirements: companies cannot share the risks in their training data or the precautions taken to ensure the safety and security of systems without first documenting that information. To be sure, thorough documentation creates delay, which is why developers chafe at the idea. But the stakes are too high not to force these revisions. Reconciliation entails compromise.
