Researchers Develop Taxonomy of Generative AI Misuse From Real World Data

Prithvi Iyer / Jul 10, 2024

A montage of stills from synthetic images depicting former President Donald Trump kissing Dr. Anthony Fauci, who headed the US National Institute of Allergy and Infectious Diseases.

Generative AI, through applications like OpenAI’s ChatGPT and Midjourney, has become widely accessible to the public. However, as adoption accelerates, civil society organizations and governments around the world are raising alarm about potential misuse. From privacy breaches to automated disinformation, the risks are significant. While previous research has examined how generative AI can be used maliciously, it is less clear how generative AI models are exploited or abused in practice. A new research study by Nahema Marchal, Rachel Xu, Rasmi Elasmar, Iason Gabriel, Beth Goldberg, and William Isaac from Google addresses this research gap by providing “a taxonomy of GenAI misuse tactics, informed by existing academic literature and a qualitative analysis of approximately 200 observed incidents of misuse reported between January 2023 and March 2024.”

While other initiatives, such as the OECD AI Incidents Monitor and the AI, Algorithmic, and Automation Incidents and Controversies Repository (AIAAIC), map AI-related incidents and associated harms, they tend to be broad in scope. In contrast, this research study looks at how these tools are exploited and abused by different actors and the tactics used to accomplish malicious goals. As generative AI continues to evolve, the authors argue that a “better understanding of how these manifest in practice and across modalities, is critical.”

Methodology

To develop a taxonomy and dataset of misuse tactics, the authors reviewed academic literature on this topic and “collected and qualitatively analyzed a dataset of media reports of GenAI misuse.” To ensure that the dataset is representative, the authors used a proprietary social listening tool “that aggregates content from millions of sources — including social media platforms such as X and Reddit, blogs and established news outlets — to detect potential abuses of GenAI tools.” Along with automated social listening, they also conducted manual searches using keywords to ensure their data set captures a wide variety of generative AI misuse tactics. After cleaning and deduplicating data, the final dataset contains 191 cases which served as the basis for further analysis.

Taxonomy of Generative AI Misuse Tactics

Based on a systematic analysis of the dataset, the authors propose a taxonomy for generative AI misuse tactics that distinguishes between the exploitation of system capabilities and compromises to the systems themselves.

Exploitation of Generative AI Capabilities

This theme includes three sub-categories of generative AI misuse, each associated with specific tactics.

1. Realistic Depiction of Human Likeness

This category includes cases of generative AI outputs pretending to be human to fulfill an adversarial goal. “Impersonation” refers to outputs that depict a real person and try to “take action on their behalf in real-time.” In contrast, outputs that depict a real person in a “static manner” (e.g., a fake photo of a celebrity) constitute “Appropriated Likeness.” When generative AI outputs create entirely synthetic personas and task them with taking action in the world, that is referred to as “Sock Puppeting.” The authors treat non-consensual sexually explicit material of adults (NCII) and child sexual abuse material (CSAM) as a separate category “even though they may deploy any of the three tactics above” because of the “uniquely damaging potential” of this material. Unlike the other tactics in this taxonomy, using generative AI to create CSAM and NCII is “policy violative, regardless of how that content is used.”

2. Realistic Depictions of Non-Humans

This sub-category includes tactics that leverage audio and image generation capabilities to create realistic depictions of songs, places, books, etc. These include “Intellectual Property Infringement,” wherein generative AI outputs duplicate human creations without permission. “Counterfeit” refers to outputs that mimic an original work while pretending to be authentic, and “Falsification” occurs when generative AI outputs depict fake events, places, and objects as real.

3. Use of Generated Content

Malicious actors can also harness generative AI capabilities to spread falsehoods at scale. For example, they can use LLMs to create and disseminate election disinformation to sway votes. This tactic is referred to as “Scaling and Amplification.” When such fake content is targeted to specific audiences using the power of generative AI, that tactic is known as “Targeting and Personalization.”

The authors note that these tactics are not mutually exclusive and are often used in tandem. For example, malicious actors often create bots to orchestrate influence operations, which involves a combination of sockpuppeting and amplification/personalization tactics.

Compromising Generative AI Systems

This theme includes two sub-categories of tactics aimed at exploiting vulnerabilities in generative AI systems themselves rather than their capabilities.

1. Attacks on Model Integrity

This sub-category involves tactics that manipulate the model, its structure, settings, or input prompts. "Adversarial Inputs" modify input data to cause model malfunction. "Prompt Injections" manipulate text instructions to exploit loopholes in model architecture, while "Jailbreaking" aims to bypass or remove safety filters completely. "Model Diversion" refers to repurposing generative AI models in ways that divert them “from their intended functionality or from the use cases envisioned by their developer,” such as training the open-source BERT model on dark web data to create DarkBERT.

2. Attacks on Data Integrity

This sub-category includes tactics that alter a model's training data or compromise its security and privacy.

- "Steganography" hides coded messages in model outputs for covert communication.

- "Data Poisoning" corrupts training datasets to introduce vulnerabilities or cause incorrect predictions.

- "Privacy Compromise" attacks reveal sensitive personal information like medical records used to train the model.

- "Data Exfiltration" attacks go beyond privacy breaches and refer to the act of illicitly obtaining training data.

- "Model Extraction" attacks work similarly to “Data Exfiltration” but target the model itself, attempting to obtain its architecture, parameters, or hyper-parameters.
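For readers who want to work with the taxonomy programmatically, for example to tag incidents in their own dataset, the structure described above can be sketched as a nested mapping. This is only an illustrative sketch based on this article's summary, not the authors' own code or data format: the names `TAXONOMY`, `TACTIC_INDEX`, and `themes_for`, as well as the example incident, are hypothetical. NCII and CSAM are listed under human likeness here, although the authors treat them as a separate category within it.

```python
from collections import Counter

# Taxonomy as summarized in this article: theme -> sub-category -> tactics.
# The layout is an assumption made for illustration only.
TAXONOMY = {
    "Exploitation of Generative AI Capabilities": {
        "Realistic Depiction of Human Likeness": [
            "Impersonation", "Appropriated Likeness", "Sock Puppeting",
            "NCII", "CSAM",
        ],
        "Realistic Depictions of Non-Humans": [
            "Intellectual Property Infringement", "Counterfeit", "Falsification",
        ],
        "Use of Generated Content": [
            "Scaling and Amplification", "Targeting and Personalization",
        ],
    },
    "Compromising Generative AI Systems": {
        "Attacks on Model Integrity": [
            "Adversarial Inputs", "Prompt Injections", "Jailbreaking",
            "Model Diversion",
        ],
        "Attacks on Data Integrity": [
            "Steganography", "Data Poisoning", "Privacy Compromise",
            "Data Exfiltration", "Model Extraction",
        ],
    },
}

# Reverse index: tactic -> (theme, sub-category).
TACTIC_INDEX = {
    tactic: (theme, sub_category)
    for theme, sub_categories in TAXONOMY.items()
    for sub_category, tactics in sub_categories.items()
    for tactic in tactics
}


def themes_for(tactics):
    """Count how many of an incident's tactics fall under each theme."""
    return Counter(TACTIC_INDEX[tactic][0] for tactic in tactics)


# Hypothetical incident: a bot-driven influence operation that combines
# two tactics, since tactics are not mutually exclusive.
incident_tactics = ["Sock Puppeting", "Scaling and Amplification"]
print(themes_for(incident_tactics))
```

Because an incident is represented as a list of tactics rather than a single label, the same case can count toward several tactics at once, which matches the authors' observation that tactics are often used in tandem.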

Findings

Based on this taxonomy, the researchers analyzed “media reports of GenAI misuse between January 2023 and March 2024 to provide an empirically-grounded understanding of how the threat landscape of GenAI is evolving.” Specifically, the authors examined the popularity of certain tactics and the goals associated with using such tactics.

The primary finding from this study was that 9 out of 10 documented cases in the dataset involved the exploitation of generative AI capabilities rather than attacks on the systems themselves. Of these, the most commonly used tactics manipulated human likeness, primarily through impersonation and sockpuppeting.

The authors also find that malicious actors misuse generative AI systems to accomplish clear and discernible goals. Between 2023 and 2024, attacks on generative AI systems, which were less common than attacks exploiting their capabilities, were “mostly conducted as part of research demonstrations or testing aimed at uncovering vulnerabilities and weaknesses within these systems.” Interestingly, the study found only two documented instances of attacks on generative AI systems. In both cases, the goal was to “prevent the unauthorized scraping of copyrighted materials and provide users with the ability to generate uncensored content.”

On the other hand, the most common goal for exploiting generative AI capabilities was to influence public opinion by using deepfakes, digital personas, and other forms of falsified media artifacts (27% of all reported cases). Using generative AI to scale, amplify, and monetize content was also very common (21% of all reported cases), followed by using generative AI for scams (18% of all reported cases). Interestingly, using generative AI to maximize reach was relatively uncommon (3.6% of reported cases).

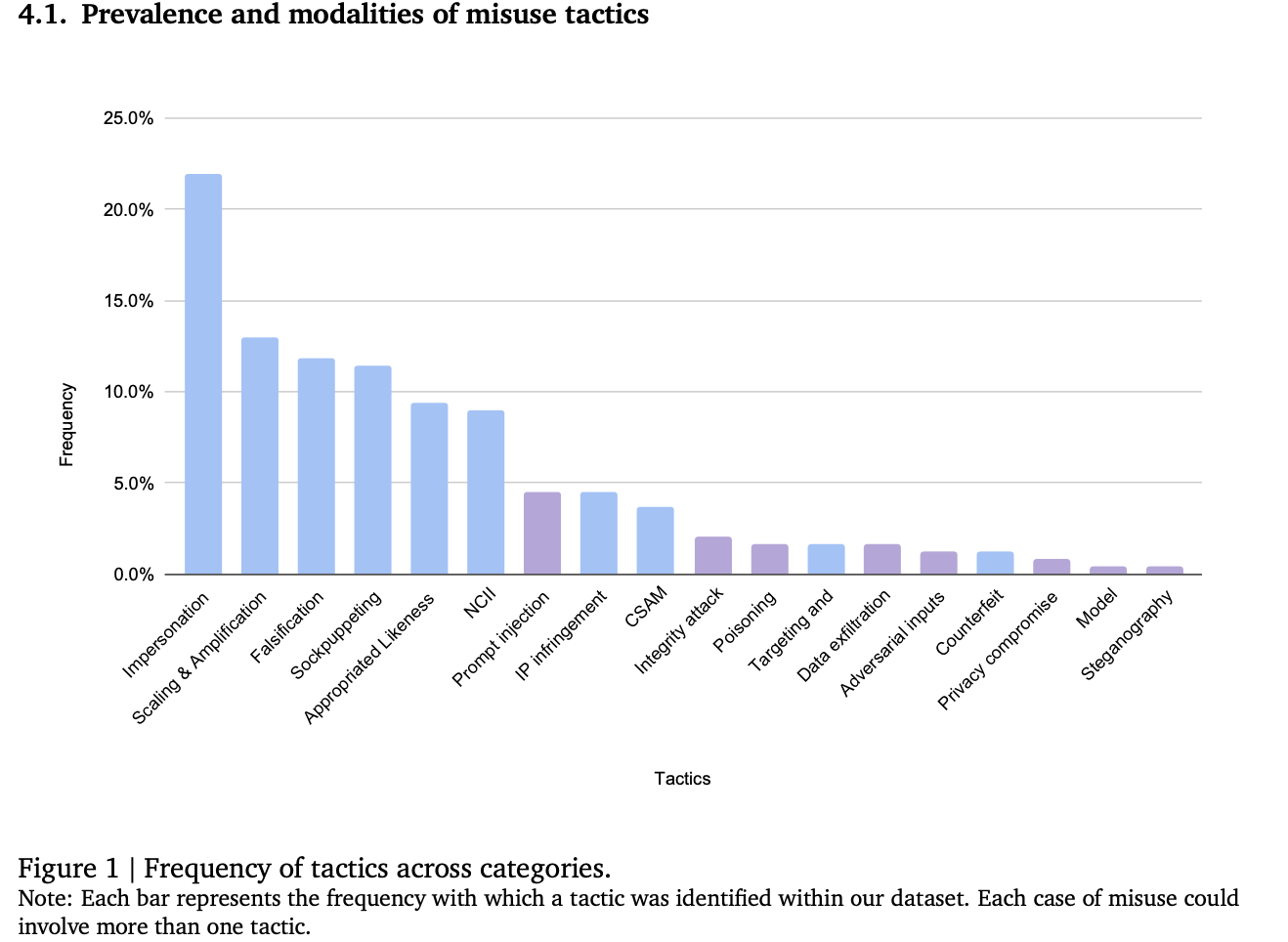

The graph below shows the frequency of misuse tactics across categories.

A bar graph that shows the frequency of Generative AI misuse tactics.

Takeaways

This study provides policymakers with a taxonomy for understanding and categorizing a wide variety of generative AI-enabled misuse tactics. The findings also show that these tools are most often used to manipulate human likenesses and falsify evidence. Perhaps most importantly, this study shows that the most prevalent threats to AI systems are not the widely feared, sophisticated state-sponsored attacks, but rather more commonplace and straightforward misuse tactics. As the authors note, “most cases of GenAI misuse are not sophisticated attacks on AI systems but readily exploit easily accessible GenAI capabilities that require minimal technical expertise.” While many of these tactics were in use long before the advent of generative AI, the ease and efficiency of these models have “altered the costs and incentives associated with information manipulation.”

These findings also have important policy implications. While model developers are actively working to resolve technical vulnerabilities, this study shows that many misuse tactics exploit social vulnerabilities, necessitating broader psycho-social interventions such as prebunking. As generative AI capabilities continue to improve, policymakers are grappling with the proliferation of AI-generated content in misinformation and manipulation campaigns, requiring ongoing adaptation of detection and prevention strategies. The authors note that while solutions like synthetic media detection tools and watermarking techniques “have been proposed and offer promise, they are far from panaceas” because malicious actors will likely develop methods to circumvent them. In cases where the risk of misuse is high and other interventions are insufficient, the authors argue that targeted restrictions on specific model capabilities and usage may be warranted to safeguard against potential harm.

Thus, combating generative AI-enabled misuse requires cooperation across civil society, government, and technology companies. While technical advancements and model mitigation strategies are important, they alone are insufficient to tackle this complex issue. As the authors note, "a deeper understanding of the social and psychological factors that contribute to the misuse of these powerful tools" is critical. This holistic perspective may enable the development of more effective, multi-faceted strategies to combat the misuse of generative AI technologies and safeguard against their potential negative impacts on society.
