US Leaders Must Advance Big Ideas To Protect Our Digital Civil Rights

Nora Benavidez / Jan 31, 2024

The United States Congress and the White House have an opportunity to broaden the privacy reforms we imagine possible, to champion privacy and civil liberties protections for all, says Nora Benavidez.

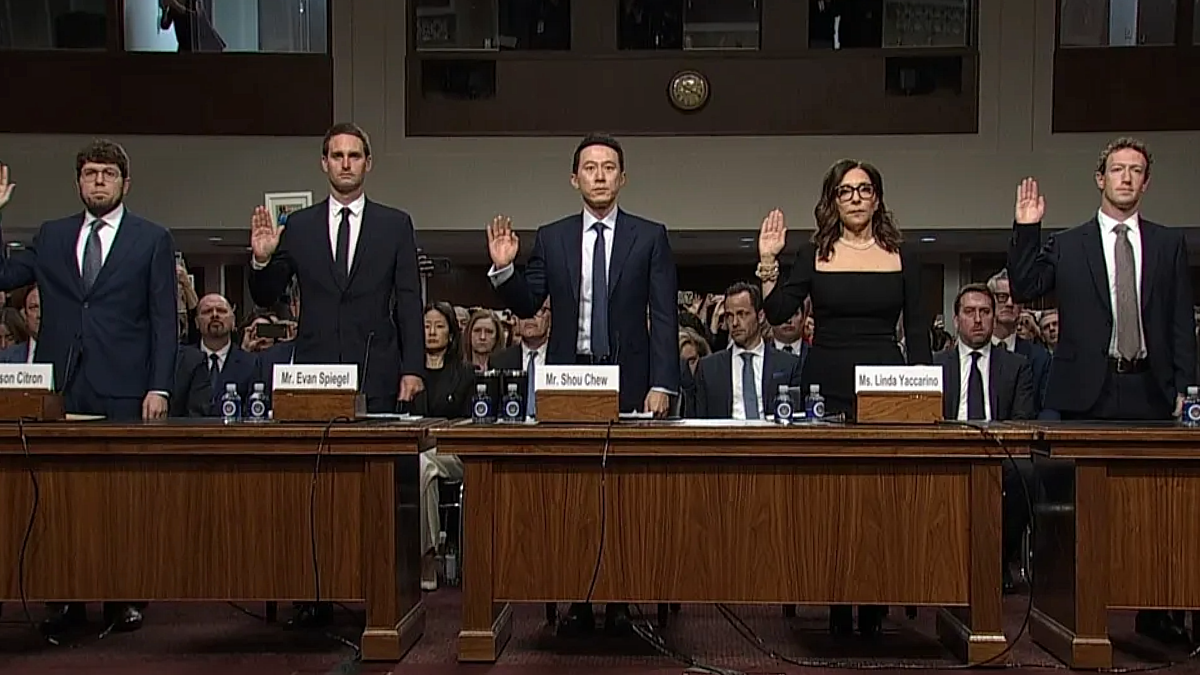

Tech CEOs testify to the Senate Judiciary Committee, January 31, 2024. (l-r) Jason Citron, Discord; Evan Spiegel, Snap; Shou Chew, TikTok; Linda Yaccarino, X; Mark Zuckerberg, Meta.

Wednesday is an important day for privacy and civil rights. This morning, the United States Senate Judiciary Committee is hearing from the CEOs of five prominent social media companies (TikTok, Meta, Snap, X, and Discord) about their efforts to protect kids on their platforms. And in the afternoon, the White House is convening experts to advise the Kids Online Health and Safety Task Force on the impact of social media and measures to protect children’s privacy. I’ll be participating in the White House convening, the first of its kind and a welcome sign of how much the American public needs interventions to protect our digital civil rights in the US.

The flurry of activity and attention on privacy is merited — in fact, it’s long overdue in the minds of those who’ve already sounded alarms about the harms of data extraction by government and the private sector. The congressional hearing and White House convening could be meaningful steps to address the grave threats posed to users of social media platforms.

We absolutely need to pressure tech companies to do better. In particular, they must reinvest in platform integrity and staff up this year. Free Press has studied the biggest social media companies’ practices. And unfortunately, as I detail in our recent report, Big Tech Backslide, these platforms have long failed to maintain the systems or human capital necessary to deliver on their promises to keep users safe. Worse still, over the last 14 months, Meta, X, and YouTube have all rolled back critical election-integrity policies and cut teams tasked with moderating content and safeguarding platform users.

The Senate and the White House are understandably focused on kids. Yet the issues raised by platforms’ cutbacks are an urgent concern not only for younger users’ safety online, but for the 2024 election cycle already in full swing in the US. There are broad implications for people of all ages and nationalities, as people in more than half of the world’s countries will vote in a major election this year. Congress should tackle these issues immediately, including at today’s Senate Judiciary Committee hearing, by doggedly seeking details on social media companies’ content-moderation policies, platform-integrity measures, and uses of AI as we head further into 2024. Members should ask the CEOs called to testify these specific questions, as well as those developed by Tech Policy Press and Issue One.

Of course, these threats are not new. Since 2017, the US Congress has held roughly 40 hearings on the need for data privacy, many of those related to the need for protections for kids. And yet lawmakers have moved no meaningful legislation.

The challenge now before lawmakers and experts is to think big: to recognize that a broader approach, one that examines all of the potential harms of disinformation, discrimination, and data abuse, matters far more as people across the planet face existential threats to democracy. Comprehensive privacy and civil rights protections are possible. They would also avoid the pitfalls of well-intentioned legislation that claims to reduce harm and remove harmful content but actually exposes everyone, including children and teens, to more invasive practices and government overreach. The most powerful step we can take now to rein in online manipulation is to introduce and pass robust federal data-privacy legislation that would limit the data collected about all of us and then used against us.

Kids-Only Legislation Won’t Blunt Widespread Online Manipulation and Harm

I understand the urge to protect children from the harms that are present online. Children are a unique set of users who must be protected from abuse – and we must address these abuses head-on. However, the impacts that can come from targeted advertising, deceptive algorithms, widespread disinformation, and inconsistent content moderation are not felt only by children. Congress needs to measure these harms more broadly: from civil rights and election abuses to targeted harassment and hate. And efforts to protect young people should protect their data and their choices, but not harm their rights to expression, information, and community.

Privacy protections that only address young users leave out swaths of other vulnerable populations. We already know that companies that use any digital automation often train their algorithms using untold amounts of data gleaned from internet users. Even the most careful internet users have had their personal information scraped by often unscrupulous social-media companies, internet service providers, retailers, government agencies and more. This data can include users’ names, addresses, purchasing histories, financial information and other sensitive data such as Social Security numbers, medical records, and even biometric data like fingerprints and iris scans. Third-party data brokers often repackage and sell such data without our knowledge or consent.

Tech companies that deal in sophisticated algorithms often say they’re gathering this information to deliver hyper-personalized experiences for people, including easier online shopping, accurate rideshare locations and tailored streaming entertainment suggestions. But dangerous consequences flow from having advanced algorithms analyze our data. Companies can use this data processing to exclude specific users from receiving critical information, violating our civil rights online and off.

Algorithms make inferences about us based on our actual or perceived identities and preferences, but those inferences are too often built on decades of institutionalized discrimination and segregation. Trained by humans using our language and patterns of behavior, algorithms carry offline biases into the online world. For example, an algorithm widely used in US hospitals to allocate health care to patients systematically discriminated against Black people, who were less likely than their equally sick white counterparts to be referred for additional care. In 2022, Meta settled a lawsuit with the Department of Justice regarding its housing advertising tools, which could be used to exclude Black users from seeing certain real estate ads on Facebook. And during the 2020 elections, Black, Indigenous and Latinx users were subjected to a sophisticated microtargeting scheme based on data collected about them, and targeted with deceptive content on social media about the voting process.

Free Press Action has been pushing for reforms for years, including introducing model legislation that explains how algorithmic discrimination and data privacy are civil-rights concerns. We’ve also worked with the Disinfo Defense League to help mobilize dozens of grassroots organizations behind a more just and democratic tech-policy platform.

We can advance solutions now, and I invite us to imagine comprehensive interventions that protect everyone. Robust and comprehensive privacy legislation must include provisions to limit the amount of data platforms can collect, process, and share with third parties, and prevent them from misusing the data they still do collect. It also must limit companies’ ability to alter their terms of service without accessible, in-language notice to users and easy opt-outs. Meaningful platform accountability legislation should also increase transparency surrounding the ways that platforms acquire, use, and retain our personal information. Such legislative measures would substantially limit the ability of platforms to target users of all ages with harmful content, while protecting users from abusive surveillance practices.

Today’s attention on online privacy is more than warranted. The discussions we’re having are welcome and timely. But we can only talk so much before we must chart a path toward action, including adopting policies that prioritize the digital civil rights of children and everyone else online. Yes, we should think big, and we should focus on connecting these ideas to actual laws that Congress must enact in 2024.
