A Big Week for AI Policy in the Biden Administration

Ben Lennett / Mar 29, 2024

US President Joe Biden and Vice President Kamala Harris at the signing of an executive order on artificial intelligence, October 30, 2023.

It was AI policy week for the Biden Administration. Yesterday, Vice President Kamala Harris announced that the White House Office of Management and Budget (OMB) was “issuing OMB’s first government-wide policy to mitigate risks of artificial intelligence (AI) and harness its benefits.” The memorandum delivers on Biden’s AI Executive Order from October 2023 that required the agency to “issue guidance to agencies to strengthen the effective and appropriate use of AI, advance AI innovation, and manage risks from AI in the Federal Government.” On Wednesday, the National Telecommunications and Information Administration (NTIA) released an AI Accountability Policy Report that calls for a “set of accountability tools and information, an ecosystem of independent AI system evaluation, and consequences for those who fail to deliver on commitments or manage risks properly.”

Though the US continues to lag behind the European Union in adopting regulations to address the risks of artificial intelligence (earlier this month, the European Parliament approved the AI Act), the two policy documents do represent the beginnings of a framework that, if implemented, could foster more responsible and accountable AI use and innovation. They offer complementary approaches leveraging the federal government's role as a purchaser and user of AI tools and systems and its role as a regulator across different sectors impacted by AI technology.

OMB Memorandum on Advancing Governance, Innovation, and Risk Management for Agency Use of Artificial Intelligence

The OMB memorandum directs federal agencies to implement several measures to strengthen AI governance, advance responsible AI innovation, and manage risks from the use of AI. As Maya Wiley, president and CEO of The Leadership Conference on Civil and Human Rights, noted in a public statement on the memo, “The guidance puts rights-protecting principles of the White House’s historic AI Bill of Rights into practice across agencies, and it is an important step in advancing civil rights protections in AI deployment at federal agencies. It extends existing civil rights protections, helping to bring them into the era of AI.”

The memo is largely focused on the federal government’s procurement and use of AI systems and implementing oversight and accountability measures to protect the public. Specific measures include:

- Requiring every federal agency to designate a Chief AI Officer (CAIO) within 60 days of issuance of the memorandum to work with other responsible officials to coordinate their agency’s use of AI, promote AI innovation, and manage risks from the use of AI.

- Requiring key agencies, including the Department of Commerce, Department of Homeland Security, and Department of Defense, to develop an enterprise strategy for how they will advance the responsible use of AI, including establishing “an AI Governance Board to convene relevant senior officials to govern the agency’s use of AI, including to remove barriers to the use of AI and to manage its associated risks.”

- Establishing minimum standards and practices “when using safety-impacting AI and rights-impacting AI” and “specific categories of AI that are presumed to impact rights and safety” as well as “a series of recommendations for managing AI risks in the context of Federal procurement.”

Beyond these agency-based approaches, the memorandum has broader implications for AI regulation. As Janet Haven writes for Tech Policy Press, the memo represents “an important stance that other branches of the US government, particularly Congress, should emulate: AI, in the here and now, can be governed. To govern AI means that as a society, we can set rules about how it can be used, in consultation with the people most impacted by those decisions.”

The new federal requirements also fit into a nationwide effort by many state and local governments to establish responsible use and procurement approaches. These efforts are happening in parallel with policy conversations focused on adapting existing regulations and laws or creating new ones to manage the risk of AI systems and protect the public.

NTIA’s AI Accountability Policy Report

Related to those conversations, NTIA released a policy report on Wednesday that calls for a “set of accountability tools and information, an ecosystem of independent AI system evaluation, and consequences for those who fail to deliver on commitments or manage risks properly.” The report was primarily authored by Ellen P. Goodman, who was on leave from the Rutgers Institute for Information Policy & Law to serve as the agency's Senior Advisor for Algorithmic Justice. It was informed by comments the agency received in response to a request for comment (RFC) released in April 2023.

The request included 34 questions about potential AI governance methods to create accountability for AI system risks and harmful impacts. It focused on “self-regulatory, regulatory, and other measures and policies that are designed to provide reliable evidence to external stakeholders—that is, to provide assurance—that AI systems are legal, effective, ethical, safe, and otherwise trustworthy.” The agency received over 1,400 comments from NGOs and individuals. In addition, the agency met with interested parties and participated in and reviewed publicly available discussions related to issues discussed in the RFC.

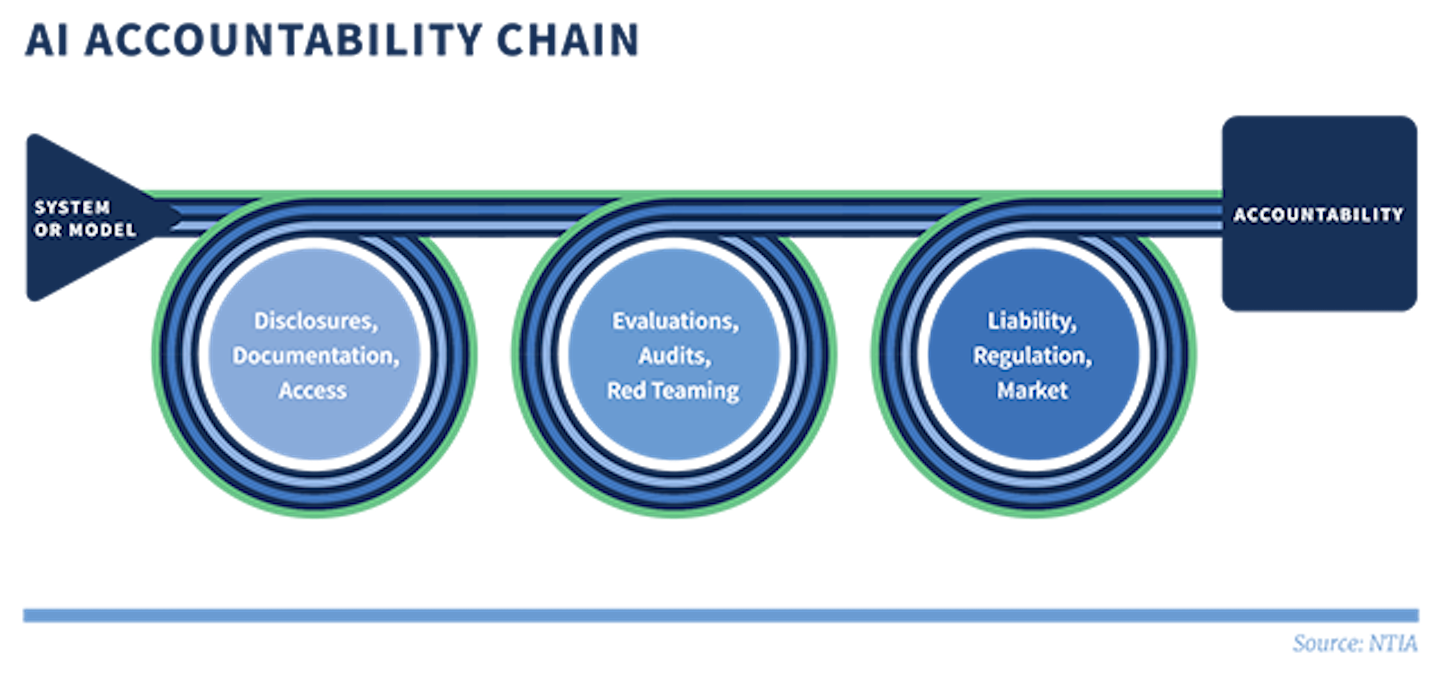

At the core of the report’s approach is the model of an accountability chain (see diagram below), where the first two links consist of accountability inputs for AI systems and tools. These include transparency elements, such as disclosures, documentation, and data access, and testing components, such as evaluations, audits, and red teaming. Accountability inputs can then feed into structures that could “hold entities accountable for AI system impacts,” including liability regimes, regulatory enforcement, and market initiatives.

Diagram from the report

The report notes that “multiple policy interventions may be necessary...” to develop the proposed accountability regime. For example, a disclosure requirement for AI systems by itself will not foster accountability. Instead, “Disclosure… is an accountability input whose effectiveness depends on other policies or conditions, such as the governing liability framework, relevant regulation, and market forces (in particular, customers’ and consumers’ ability to use the information disclosed to make purchase and use decisions).”

The report recommends policymakers focus on interventions that provide guidance, support, and regulatory requirements to establish the envisioned accountability chain. Guidance measures include guidelines for AI audits and auditors, improved information disclosures, and adaptation of existing liability rules and standards. Support measures include investment in technical infrastructure, AI system access tools, personnel, and standards work. Finally, regulatory requirements include independent evaluation and inspection of high-risk AI systems and strengthening capacity to address cross-sectoral risks and practices related to AI.

What’s Next?

Considerable work still lies ahead to implement and develop the measures detailed in the OMB memo and the NTIA report. Still, they do represent progress in articulating a clear set of policy interventions, many of which can be enforced by federal agencies with existing authorities. Beyond the policy report, NTIA was also tasked by the AI Executive Order to “conduct a public consultation process and issue a report on the potential risks, benefits, other implications, and appropriate policy and regulatory approaches to dual-use foundation models for which the model weights are widely available.” These open-source AI models foster research, use, and innovation by academics and entrepreneurs but pose significant risks, given their potential for misuse. The consultation was recently closed, and NTIA will now work on developing the report.

In February 2024, the Commerce Department announced an executive team to lead the US AI Safety Institute (AISI) at the National Institute of Standards and Technology (NIST) as part of another Biden AI Executive Order measure. According to its inaugural director, Elizabeth Kelly, “The Safety Institute’s ambitious mandate to develop guidelines, evaluate models, and pursue fundamental research will be vital to addressing the risks and seizing the opportunities of AI.” NIST also created the US AI Safety Institute Consortium, which “brings together more than 200 organizations to develop science-based and empirically backed guidelines and standards for AI measurement and policy,” including testing protocols for AI safety.

Beyond the administration’s efforts, Congress is also holding hearings and discussing legislative approaches. Last year, the Senate held several insight forums to discuss AI. House leaders also recently formed a bipartisan task force to explore potential legislation to address concerns around artificial intelligence. Although lawmakers have introduced several bills on the topic, none has yet gained significant momentum.
