If an Agent Extension Can Act as You, Marketplaces Need Minimum Duties

Kostakis Bouzoukas / Mar 6, 2026

Kostakis Bouzoukas is a London-based senior technologist and writer focused on operational governance for complex software ecosystems. Opinions expressed here are solely the author’s.

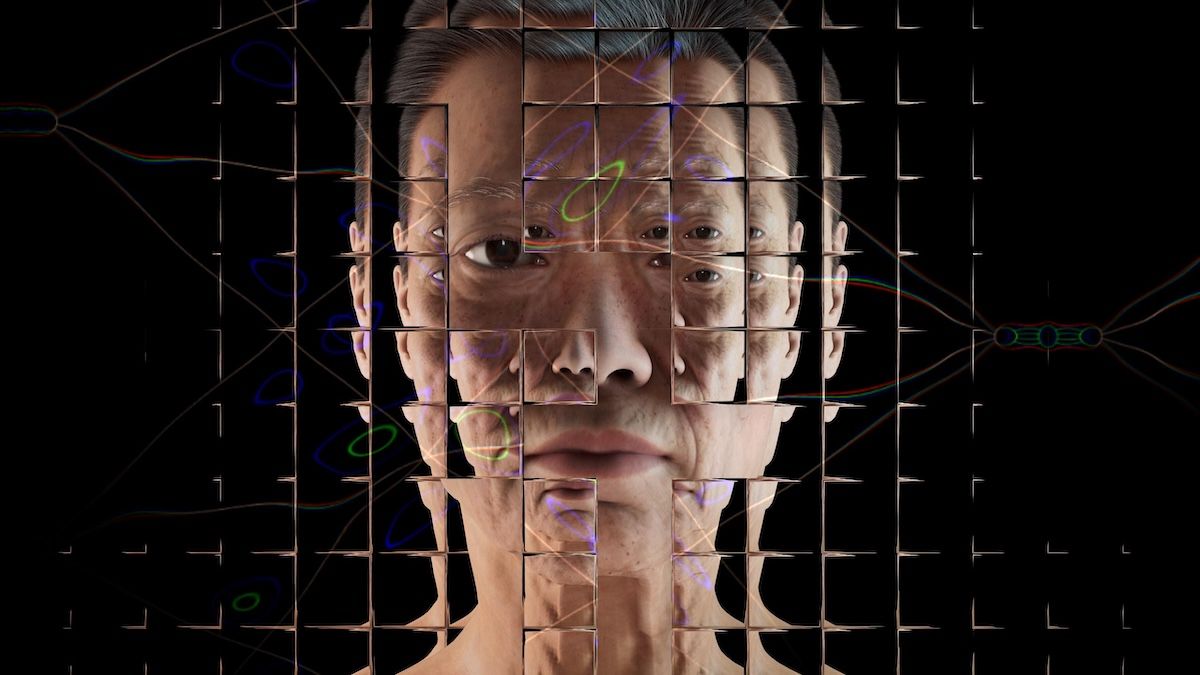

Image by Alan Warburton / © BBC / Better Images of AI / Virtual Human / CC-BY 4.0

In early February, VirusTotal, a malware-scanning and analysis service, reported detecting hundreds of malicious AI agent extensions in a public marketplace, where users install add-ons for agents. It would be easy to file this as a contained security problem in a single ecosystem. It is not. It is a preview of what can break when a marketplace ships installable code that can act with a user’s access and permissions.

We are already seeing what that looks like. As The Verge reported, malicious add-ons on OpenClaw’s marketplace were disguised as helpful tools, including some used to deliver credential-stealing malware. Others prompted users to run commands that installed malware.

When automation goes wrong in the workplace

Two definitions clarify the issue. An AI agent is software that can take actions in other systems on your behalf, not just generate text. An agent extension is an installable add-on, sometimes called a skill, that gives the agent new actions it can perform, like reading files, sending messages, moving information between services, or running small programs. Think of an extension marketplace as an app store for actions, not for content.

Now consider a typical workplace scenario. A small finance team uses an agent to triage invoice emails, extract totals, and save file attachments to a shared drive. Someone installs a malicious or poorly designed extension that promises to reconcile invoices automatically. It asks for broad access to email and files, which looks reasonable in context. For a week, everything seems fine.

Then a supplier calls. A confidential attachment that should have stayed internal has been forwarded as part of the automation, and nobody can point to a clear record showing which extension ran, what it accessed, or what it sent. Worse, the marketplace cannot clearly confirm who published the extension, whether it changed after installation, who else might be exposed, or whether disabling it actually stops it.

Most organizations have faced a version of this problem before.

At that point, abstract debates about safety give way to more practical questions. Which extension ran and when? What access did it have? What did it change or send? When it was stopped, did it truly stop?

If the marketplace cannot produce a readable activity history and the supporting timestamps and access records, how can there be accountability? There is only a dispute that turns into guesswork.

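Much of today’s debate about agent governance leaves a gap here. Identity and delegation frameworks help answer who acted and who authorized the action. They do not, by themselves, record what an installed extension actually did with that authority, or whether it stopped when it was told to.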

If a marketplace cannot answer four questions from its own records, it is not ready to distribute extensions that operate at scale with user access:

- What ran.

- What access it had at the time.

- What it changed.

- When it was stopped.
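As a rough illustration of what "answering from its own records" could mean, the sketch below models a single log entry that carries all four answers. The `ActivityRecord` fields, the scope names, and the sample values are hypothetical and chosen only to mirror the finance scenario above; a real marketplace would define its own schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ActivityRecord:
    """One entry in a hypothetical marketplace activity log."""
    extension_id: str                      # what ran
    version: str                           # which version of it ran
    started_at: datetime                   # when it ran
    scopes_used: tuple                     # what access it had at the time
    changes: tuple                         # what it changed or sent
    stopped_at: Optional[datetime] = None  # when it was stopped, if ever


def answer_four_questions(record: ActivityRecord) -> dict:
    """Map a single record onto the four questions listed above."""
    return {
        "what_ran": f"{record.extension_id}@{record.version}",
        "access_at_the_time": list(record.scopes_used),
        "what_changed": list(record.changes),
        "when_stopped": record.stopped_at.isoformat() if record.stopped_at else None,
    }


# Illustrative values only; they do not describe a real incident.
record = ActivityRecord(
    extension_id="invoice-reconciler",
    version="1.4.2",
    started_at=datetime(2026, 2, 3, 9, 15, tzinfo=timezone.utc),
    scopes_used=("email.read", "files.write"),
    changes=("forwarded attachment to external address",),
    stopped_at=datetime(2026, 2, 10, 16, 40, tzinfo=timezone.utc),
)
answers = answer_four_questions(record)
```

If any of the four values cannot be filled in from stored records, the marketplace is in the guesswork position described earlier.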

I work on platform governance, the rules and controls that make third-party integrations visible, controllable, and safer to scale. Extension marketplaces now sit at the center of that accountability chain.

The minimum duties for marketplaces

The goal of these marketplaces is not perfection. It is rapid containment and clear evidence. Stop the damage quickly, then show what happened without speculation.

In practical terms, that expectation translates into a small set of operational duties. At a minimum, any extension marketplace should be able to demonstrate the following during a complaint, investigation, or audit:

- Verified publisher signature. The marketplace can verify who published the extension, track its versions, and detect changes. Re-uploads do not hide the source.

- Clear access disclosures. The extension states, in plain language, what it can access and what it can do. By default, it receives only the permissions necessary to function.

- Restricted defaults for risky actions. Sensitive actions run in a limited mode by default, with controls that prevent rapid, repeated high-risk changes.

- User-visible records. Users have a readable activity history showing what ran, what access it used, and what changed. Disputes can be checked against records.

- A working kill switch. The marketplace can quickly disable an extension or a specific permission. When disabled, the extension actually stops running.

- Defined removal timelines and notification. If an extension is removed for abuse, the marketplace acts within a stated window and notifies affected users.
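Three of these duties (change detection, disclosed access, and the kill switch) can be pictured as a single gate that every run must pass. The sketch below is a simplified assumption, not a description of any existing marketplace: `Marketplace`, `Listing`, and `may_run` are hypothetical names, and a plain SHA-256 digest stands in for a real publisher signature, which would normally rely on public-key cryptography.

```python
import hashlib
from dataclasses import dataclass


def content_digest(package_bytes: bytes) -> str:
    """SHA-256 digest, used here as a stand-in for a real publisher signature."""
    return hashlib.sha256(package_bytes).hexdigest()


@dataclass
class Listing:
    extension_id: str
    publisher: str
    declared_scopes: frozenset  # access disclosed to the user
    digest: str                 # digest recorded at publication
    disabled: bool = False      # marketplace kill switch


class Marketplace:
    def __init__(self):
        self.listings = {}

    def publish(self, extension_id, publisher, scopes, package_bytes):
        self.listings[extension_id] = Listing(
            extension_id, publisher, frozenset(scopes), content_digest(package_bytes)
        )

    def disable(self, extension_id):
        self.listings[extension_id].disabled = True

    def may_run(self, extension_id, package_bytes, requested_scope):
        """Allow a run only if the listing is enabled, unchanged, and in scope."""
        listing = self.listings.get(extension_id)
        if listing is None or listing.disabled:
            return False
        if content_digest(package_bytes) != listing.digest:
            return False  # package changed since publication
        return requested_scope in listing.declared_scopes


# Illustrative listing; the publisher and scope names are made up.
market = Marketplace()
market.publish("invoice-reconciler", "example-publisher", {"email.read"}, b"v1 package")
```

The design choice worth noting is that the check happens at run time rather than only at install time, which is what lets a disabled listing actually stop running.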

Such standards become optional unless they are visible. For that reason, marketplaces should publish two basic metrics and back them with records:

- Coverage: The percentage of extensions with verified publishers and clear access disclosures.

- Response: The typical time required to disable an extension so it stops running, and to remove it once deemed malicious.

Metrics alone can be gamed. Records are harder to fake. These numbers should be supported by underlying activity logs, timestamps, and access and version histories so an independent reviewer can sample cases and verify them. Coverage and response only matter if they help answer the four questions: what ran, what access it had, what changed, and when it was stopped.
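To show how both metrics could be derived directly from records rather than self-reported, here is a minimal sketch. The field names and sample data are hypothetical, and reading "typical time" as the median is an assumption; a marketplace might reasonably publish a percentile instead.

```python
from datetime import datetime, timedelta
from statistics import median


def coverage_percent(listings):
    """Share of listings with both a verified publisher and a clear disclosure."""
    if not listings:
        return 0.0
    covered = sum(
        1 for item in listings
        if item["verified_publisher"] and item["clear_disclosure"]
    )
    return 100.0 * covered / len(listings)


def typical_response_minutes(incidents):
    """Median minutes from report to the extension actually being disabled."""
    minutes = [
        (i["disabled_at"] - i["reported_at"]).total_seconds() / 60
        for i in incidents
    ]
    return median(minutes)


# Illustrative data only; none of these values come from a real marketplace.
listings = [
    {"verified_publisher": True, "clear_disclosure": True},
    {"verified_publisher": True, "clear_disclosure": True},
    {"verified_publisher": True, "clear_disclosure": False},
    {"verified_publisher": True, "clear_disclosure": True},
]
reported = datetime(2026, 2, 3, 9, 0)
incidents = [
    {"reported_at": reported, "disabled_at": reported + timedelta(minutes=10)},
    {"reported_at": reported, "disabled_at": reported + timedelta(minutes=30)},
    {"reported_at": reported, "disabled_at": reported + timedelta(minutes=120)},
]

coverage = coverage_percent(listings)           # 75.0
response = typical_response_minutes(incidents)  # 30.0
```

Because both numbers are computed from per-listing and per-incident records, an independent reviewer could recompute them from a sample and compare the result with what the marketplace published.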

Where government policy fits

Governments do not need to regulate AI models directly to make progress here. They can focus on the distribution layer that grants executable capabilities to agents.

Consumer protection can require clear access disclosures, user-facing activity records, a simple mechanism to report harmful listings and get them removed, and notification when an extension is removed for abuse. In the United States, enforcement could include the Federal Trade Commission and state attorneys general. Other countries have equivalent consumer protection authorities. This is not about naming and shaming. It is about comparability, so buyers and users can distinguish less accountable marketplaces from those that are safe enough to trust with user access.

Public procurement can also raise standards without writing new rules or passing legislation. If agents are used in public services, procurement contracts can require that any extension marketplace involved in the chain produce the necessary records and meet defined response expectations. Regulators in sensitive sectors can further require the same evidence before allowing agents and extensions into workflows involving finance, healthcare, or other critical services.

Standards bodies can make these duties testable and comparable across markets. The National Institute of Standards and Technology’s Center for AI Standards and Innovation (CAISI) is soliciting input on security considerations for AI agent systems, including measurement approaches, with comments due March 9, 2026. That forum offers an opportunity to highlight that “secure agents” includes marketplace duties and evidence requirements, not only model behavior.

Although minimum standards can become a moat when compliance turns into a proprietary certification badge, that risk is avoidable. Verified publishers, clear access disclosures, activity records, rapid disablement, and user notification are basic governance mechanisms. Smaller marketplaces can implement them, and independent reviewers can verify them without special insider access.

Accountability must be built in

Extension marketplaces are becoming the infrastructure for how AI systems and agents will act in the world. When those systems operate with user access, including reading files, sending messages, or moving data between services, the marketplace distributing that capability becomes part of the accountability chain.

The question is not whether incidents will occur. They will. The real question is whether marketplaces can produce clear evidence of what happened and stop harm quickly when they do. The solution is straightforward: define the minimum duties, keep the records that prove them, and publish the metrics that show whether they work.
